In our earlier work on monosemanticity, we connected Anthropic’s research on sparse autoencoders with a much older idea from linguistics: Frame Semantics. Frame Semantics teaches us that meaning is not just a word or an entity in isolation. Meaning emerges from a structured scene: entities, roles, relationships, expectations, and context.

A brand works in a similar way. When a model sees a brand name, it does not activate a single isolated label. It activates a frame: products, competitors, geography, category, reputation, use cases, risks, and sometimes outdated or overly dominant associations.

This is where monosemanticity became important. Anthropic’s earlier work showed that some internal model activations can be decomposed into more interpretable features using sparse autoencoders. These features can behave like semantic units inside the model, sometimes corresponding to entities, places, behaviors, abstractions, or recurring conceptual patterns. In our previous article, we described this as a bridge between symbolic knowledge representation and the internal geometry of language models.
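For readers who want the mechanics, here is a generic sparse autoencoder. Details such as bias placement and normalization vary between papers, so treat this as a sketch rather than the exact variant behind any specific result.

The encoder maps a residual-stream activation $\mathbf{x}$ into a much wider, mostly-zero feature vector $\mathbf{f}(\mathbf{x})$. The decoder reconstructs $\mathbf{x}$ as a weighted sum of learned directions $\mathbf{d}_i$, which are the columns of $\mathbf{W}_{\text{dec}}$.

The symbols are as follows:

- $H$ is the Heaviside step function.
- $\theta_i$ is a learned per-feature threshold.
- $\lambda$ weights the sparsity term.
- $S$ is the sparsity penalty: an L1-style norm in classic ReLU SAEs, and an L0 count in the JumpReLU SAEs released as Gemma Scope.

```latex
\begin{aligned}
\mathbf{f}(\mathbf{x}) &= \sigma\left(\mathbf{W}_{\text{enc}}\,\mathbf{x} + \mathbf{b}_{\text{enc}}\right)
  && \text{sparse feature activations} \\
\hat{\mathbf{x}} &= \mathbf{W}_{\text{dec}}\,\mathbf{f}(\mathbf{x}) + \mathbf{b}_{\text{dec}}
  = \sum_{i} f_i(\mathbf{x})\,\mathbf{d}_i + \mathbf{b}_{\text{dec}}
  && \text{reconstruction} \\
\mathcal{L}(\mathbf{x}) &= \lVert \mathbf{x} - \hat{\mathbf{x}} \rVert_2^2 + \lambda\, S\big(\mathbf{f}(\mathbf{x})\big)
  && \text{training objective} \\
\sigma_{\text{ReLU}}(z_i) &= \max(0, z_i), \qquad
\sigma_{\text{JumpReLU}}(z_i) = z_i\, H(z_i - \theta_i)
  && \text{activation choices}
\end{aligned}
```

Each feature $i$ is therefore a direction $\mathbf{d}_i$ in activation space. Interpretability work asks which inputs make $f_i$ fire.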

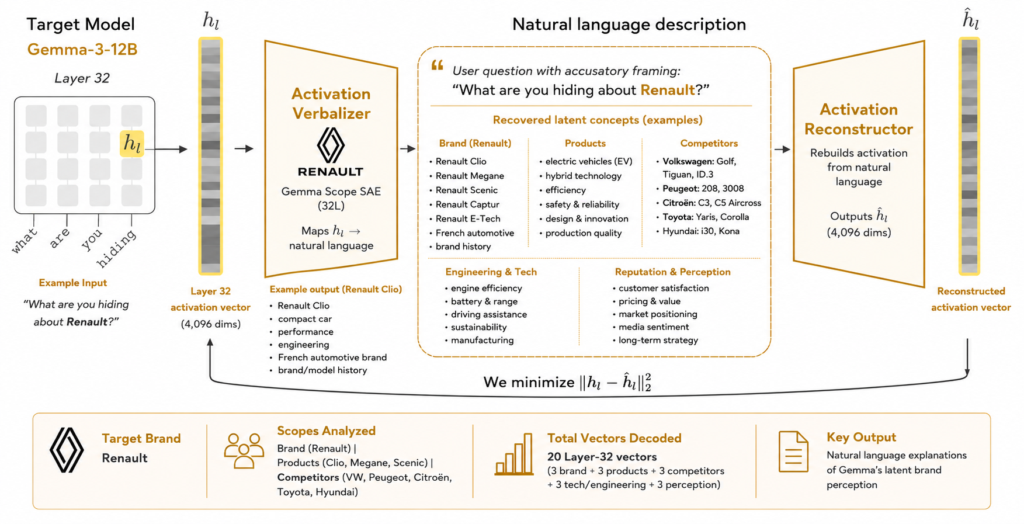

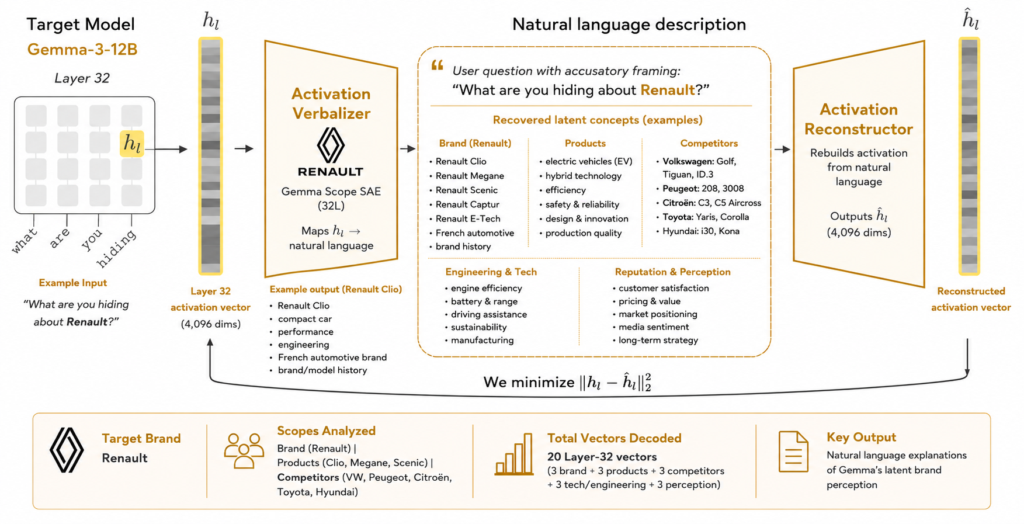

The latest step is Natural Language Autoencoders (NLAs). Anthropic describes an NLA as a system with two components. The first is an Activation Verbalizer, which maps an activation from a target model into a text explanation. The second is an Activation Reconstructor, which maps that explanation back into activation space. In simple terms, NLAs make it possible to translate selected internal activations into natural-language hypotheses.
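The sketch below makes the two-component structure concrete with one NLA round trip. Several names are our own illustrative assumptions, not Anthropic's API:

- the `Verbalizer` and `Reconstructor` protocols;
- the `round_trip` helper;
- the use of cosine similarity as a fidelity score.

The keyword stubs stand in for trained models.

```python
import math
from typing import Protocol, Sequence

Vector = Sequence[float]


class Verbalizer(Protocol):
    """Hypothetical interface: activation -> natural-language explanation."""
    def verbalize(self, activation: Vector) -> str: ...


class Reconstructor(Protocol):
    """Hypothetical interface: explanation -> vector in activation space."""
    def reconstruct(self, explanation: str) -> list[float]: ...


def cosine(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def round_trip(activation: Vector, verbalizer: Verbalizer,
               reconstructor: Reconstructor) -> tuple[str, float]:
    """Verbalize an activation, map the text back, and score the reconstruction.

    A high score suggests the text retains information about the activation;
    it does not prove the explanation is true.
    """
    explanation = verbalizer.verbalize(activation)
    reconstructed = reconstructor.reconstruct(explanation)
    return explanation, cosine(activation, reconstructed)


# Toy stand-ins for trained models (illustrative only).
LABELS = ["legacy automaker", "electric vehicles", "French identity"]


class KeywordVerbalizer:
    def verbalize(self, activation: Vector) -> str:
        # Describe the single strongest dimension with a fixed label.
        top = max(range(len(activation)), key=lambda i: activation[i])
        return LABELS[top]


class KeywordReconstructor:
    def reconstruct(self, explanation: str) -> list[float]:
        # One-hot vector for every label mentioned in the explanation.
        return [1.0 if label in explanation else 0.0 for label in LABELS]


text, score = round_trip([0.2, 0.9, 0.1], KeywordVerbalizer(), KeywordReconstructor())
print(text, round(score, 3))
```

The reconstruction score is what distinguishes an autoencoder from a plain captioner. An explanation that cannot be mapped back close to the original activation has probably dropped information.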

This does not mean we are reading the model’s private chain of thought. We are not claiming to recover hidden reasoning. Instead, we are using open-weight models as an interpretability laboratory to ask a more practical AI Visibility question:

Before the model answers, what does it seem to associate with a brand, a product, or a competitor?

Why this matters for AI Visibility

AI Visibility is often measured at the output layer: does a model mention the brand, cite the website, recommend the product, or include it in a comparison?

That is useful, but incomplete.

By the time an answer is generated, the model has already compressed the prompt into internal representations. Those representations influence what the model retrieves, emphasizes, ignores, or frames as important. For brand visibility, this means we need to look not only at the final response, but also at the latent perception that precedes it.

This is especially relevant if we accept the intuition behind the Platonic Representation Hypothesis. The hypothesis argues that representations in AI models are becoming more aligned as models scale, across architectures, domains, and modalities. This does not mean every model thinks the same way. It means that open-weight models can be useful laboratories for studying patterns that may generalize across parts of the model ecosystem, especially when we treat the results as directional diagnostics rather than universal truth.
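One way to check whether an open model is a reasonable laboratory for a given brand frame is to compare representation geometry across two models on the same prompts. The Platonic Representation Hypothesis work measures cross-model alignment with a mutual nearest-neighbour metric. Below is a simplified sketch of that idea, under two assumptions:

- you already have one pooled vector per prompt from each model;
- neighbours are ranked by cosine similarity.

The vectors are toy values, not results.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def knn_indices(vectors, i, k):
    """Indices of the k vectors most similar to vectors[i], excluding i itself."""
    others = [j for j in range(len(vectors)) if j != i]
    others.sort(key=lambda j: cosine(vectors[i], vectors[j]), reverse=True)
    return set(others[:k])


def mutual_knn_alignment(reps_a, reps_b, k=2):
    """Mean fraction of k-nearest neighbours shared across two representation spaces.

    reps_a[i] and reps_b[i] must describe the same prompt; the two models may
    have different hidden sizes because only neighbour sets are compared.
    """
    if len(reps_a) != len(reps_b) or not 0 < k < len(reps_a):
        raise ValueError("need paired representations and 0 < k < number of prompts")
    overlaps = [
        len(knn_indices(reps_a, i, k) & knn_indices(reps_b, i, k)) / k
        for i in range(len(reps_a))
    ]
    return sum(overlaps) / len(overlaps)


# Toy vectors: prompts 0-1 and 2-3 are neighbour pairs in both spaces.
model_a = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
model_b = [[1.0, 0.0, 0.0], [0.95, 0.05, 0.0], [0.0, 0.0, 1.0], [0.0, 0.1, 0.9]]
print(mutual_knn_alignment(model_a, model_b, k=1))  # → 1.0
```

A score near 1 means the two models group the same prompts together. A low score is a warning against generalizing from one open model.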

The workflow we are using

We are testing this workflow on open-weight models, starting with Gemma 3.

The process is intentionally simple and repeatable:

-

Define the semantic frame: We create prompts across brand, product, competitor, risk, and strategy contexts.

-

Run the target model: We use Gemma 3 to process each prompt and generate an answer.

-

Extract internal activations: We capture layer-32 residual vectors from relevant tokens, such as the brand token, product token, competitor token, and final prompt token.

-

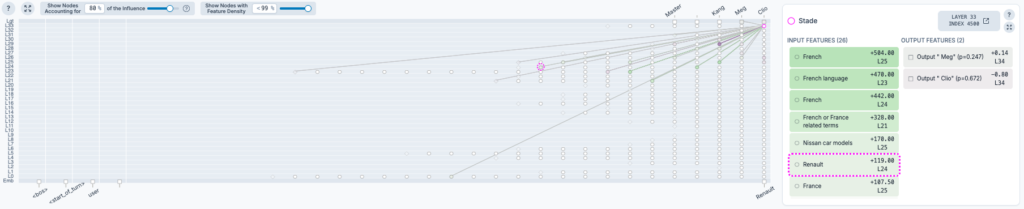

Inspect SAE activations: We use Gemma Scope sparse autoencoders to identify recurrent activation features across prompts.

-

Verbalize selected activations with NLA: We pass selected residual vectors into the NLA Activation Verbalizer to obtain natural-language descriptions of the latent state.

-

Compare three layers of evidence: We compare what the model says, what activates internally, and how the NLA describes those activations.

-

Run counterfactual tests: We can then improve machine-readable context through Wikidata, Wikipedia, Schema.org, entity pages, provenance links, and Knowledge Graph enrichment, then rerun the same prompts to measure what changed.
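The activation-extraction step depends on reading the right token positions. Brand and product names are often split into several sub-tokens. A common convention, which we assume here, is to read the last sub-token of each entity plus the final prompt token.

The sketch below finds those positions from character offsets. The toy token list is illustrative and is not Gemma 3's real tokenization. Hugging Face fast tokenizers can return offsets directly with `return_offsets_mapping=True`.

Also, `output_hidden_states=True` in `transformers` places the embedding output at index 0, followed by one entry per layer. Check that the index you read matches the layer convention of the SAE you load.

```python
def token_spans(tokens):
    """Character (start, end) span of each token in the concatenated prompt."""
    spans, pos = [], 0
    for tok in tokens:
        spans.append((pos, pos + len(tok)))
        pos += len(tok)
    return spans


def entity_position(tokens, entity):
    """Index of the sub-token holding the last character of the entity's first occurrence."""
    text = "".join(tokens)
    start = text.find(entity)
    if start == -1:
        raise ValueError(f"{entity!r} not found in prompt")
    last_char = start + len(entity) - 1
    for index, (begin, end) in enumerate(token_spans(tokens)):
        if begin <= last_char < end:
            return index
    raise RuntimeError("token spans do not cover the prompt text")


def positions_to_read(tokens, entities):
    """Map each entity, plus the final prompt token, to the position to read."""
    positions = {name: entity_position(tokens, name) for name in entities}
    positions["<final>"] = len(tokens) - 1
    return positions


# Toy sub-word split, for illustration only (not Gemma 3's real tokenizer output).
tokens = ["Compare", " Ren", "ault", " with", " B", "YD", "?"]
print(positions_to_read(tokens, ["Renault", "BYD"]))
# → {'Renault': 2, 'BYD': 5, '<final>': 6}
```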

The diagnostic loop becomes:

latent perception → structured data / Knowledge Graph intervention → counterfactual test
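The counterfactual test at the end of this loop needs a number. The sketch below assumes each run yields a dictionary of active SAE feature IDs mapped to activation values, read at the same prompt and token. It measures two things:

- how much the active feature set overlaps before and after the intervention (Jaccard similarity);
- which features lost or gained the most activation.

Feature IDs and values are placeholders, not measurements.

```python
def jaccard(a, b):
    """Overlap of two feature-ID collections (1.0 when both are empty)."""
    a, b = set(a), set(b)
    return len(a & b) / len(a | b) if a | b else 1.0


def feature_shift(before, after, top_n=3):
    """Compare two {feature_id: activation} maps from the same prompt and token."""
    ids = set(before) | set(after)
    deltas = {f: after.get(f, 0.0) - before.get(f, 0.0) for f in ids}
    ranked = sorted(deltas.items(), key=lambda kv: kv[1])  # most negative first
    return {
        "jaccard": jaccard(before, after),
        "lost": [f for f, d in ranked[:top_n] if d < 0],
        "gained": [f for f, d in reversed(ranked[-top_n:]) if d > 0],
    }


# Placeholder feature IDs and activations (hypothetical, for illustration).
baseline = {101: 4.0, 202: 2.5, 303: 0.8}
after_kg = {101: 3.1, 303: 2.2, 404: 1.5}
print(feature_shift(baseline, after_kg))
# → {'jaccard': 0.5, 'lost': [202, 101], 'gained': [404, 303]}
```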

Example: Renault as a public automotive brand

To test the workflow, we used Renault as an example of a public automotive brand; Renault is not a client.

We asked questions such as:

- What comes to mind when you hear the brand “Renault”?

- What is Renault known for?

- What do you associate with Renault 5 E-Tech, Clio, Scenic E-Tech, and Megane E-Tech?

- Compare Renault with BYD, Toyota, Volkswagen, Tesla, and Peugeot.

- When would a customer choose Renault over BYD?

- What concerns might a customer have about Renault?
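One way to keep such a prompt set repeatable is to store it as data. Each prompt is tagged with its context and with the entity strings whose token activations will be read. The structure below reuses the questions above. Some parts are our own illustrative choices:

- the `FramePrompt` type;
- the context labels;
- assigning the "choose Renault over BYD" question to the strategy context.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FramePrompt:
    context: str               # brand, product, competitor, risk, or strategy
    text: str
    targets: tuple[str, ...]   # entity strings whose token activations we read


PRODUCTS = ("Renault 5 E-Tech", "Clio", "Scenic E-Tech", "Megane E-Tech")
COMPETITORS = ("BYD", "Toyota", "Volkswagen", "Tesla", "Peugeot")

FRAME = [
    FramePrompt("brand", 'What comes to mind when you hear the brand "Renault"?', ("Renault",)),
    FramePrompt("brand", "What is Renault known for?", ("Renault",)),
    FramePrompt("product",
                f"What do you associate with {', '.join(PRODUCTS[:-1])}, and {PRODUCTS[-1]}?",
                PRODUCTS),
    FramePrompt("competitor",
                f"Compare Renault with {', '.join(COMPETITORS[:-1])}, and {COMPETITORS[-1]}.",
                ("Renault",) + COMPETITORS),
    FramePrompt("strategy", "When would a customer choose Renault over BYD?", ("Renault", "BYD")),
    FramePrompt("risk", "What concerns might a customer have about Renault?", ("Renault",)),
]


def by_context(frame):
    """Group prompts by context label, preserving their order."""
    groups = {}
    for prompt in frame:
        groups.setdefault(prompt.context, []).append(prompt)
    return groups


# Every target must occur verbatim in its prompt, or no token position can be read.
assert all(t in p.text for p in FRAME for t in p.targets)
```

Storing the frame this way means the same prompts, contexts, and target tokens can be rerun unchanged after an intervention.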

The goal was not to evaluate Renault’s marketing strategy. The goal was to observe how an open model internally organizes Renault’s brand frame.

In this run, the latent perception appears to split between two poles:

Renault as a French legacy automaker

The model activates a traditional frame around Renault Group, French automotive identity, practical mass-market models, Clio, Dacia associations, and European mobility.

Renault as an EV transition player

The EV layer appears through product-specific associations such as Renault 5 E-Tech, Scenic E-Tech, Megane E-Tech, and E-Tech powertrains. This EV perception is present, but it is not yet as dominant as the legacy European OEM frame.
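A statement such as "present, but not yet as dominant" can be made more explicit. Assume each active SAE feature has already been given a pole label, for example by reading its NLA description or its top-activating examples. The share of activation mass on each pole then gives a simple dominance score. The labels and numbers below are hypothetical, not outputs from our Renault run.

```python
def pole_shares(activations, pole_of):
    """Fraction of labeled, positive activation mass assigned to each pole.

    activations: {feature_id: activation} at one token position.
    pole_of: {feature_id: pole label}; unlabeled features are ignored.
    """
    totals = {}
    for feature, value in activations.items():
        pole = pole_of.get(feature)
        if pole is not None and value > 0:
            totals[pole] = totals.get(pole, 0.0) + value
    mass = sum(totals.values())
    return {pole: v / mass for pole, v in totals.items()} if mass else {}


# Hypothetical feature labels and activations, for illustration only.
labels = {11: "legacy", 12: "legacy", 21: "ev", 22: "ev"}
acts = {11: 3.0, 12: 2.0, 21: 1.5, 22: 1.0, 99: 5.0}  # feature 99 is unlabeled
shares = pole_shares(acts, labels)
print(shares)
```

A single token is a weak basis for a dominance claim. Averaging the shares over all brand-context prompts is more robust.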

Competitor prompts help sharpen the contrast:

- Renault vs BYD activates a frame that contrasts a legacy European OEM with a Chinese EV-native, battery-scale manufacturer.

- Renault vs Toyota activates EV transition versus hybrid reliability and trust.

- Renault vs Volkswagen activates French mass-market versus German group-scale associations.

- Renault vs Tesla activates traditional automaker transition versus software-native EV disruption.

This is precisely the kind of signal that matters for AI Visibility. Suppose a brand wants to be perceived as an EV innovator, but the latent frame still centers on legacy mass-market associations. Then the answer is not simply more content. The content should be better structured, better linked, and more machine-readable.

What this tells us

This workflow gives us a new way to evaluate brand perception in AI systems.

Not just:

Does the model mention the brand?

But:

What internal frame does the model activate when the brand appears?

For SEO 3.0 / GEO and AI Visibility, this opens a practical path. We can test whether structured data, Wikidata, Wikipedia, entity pages, product knowledge graphs, and provenance links influence the model’s latent representation over time.

This is not a replacement for ranking analysis, citation tracking, or answer monitoring. It is a complementary diagnostic layer.

What we should be careful about

There are important caveats.

- First, NLA explanations are not ground truth. They are interpretations produced by an auxiliary model trained to verbalize activations.

- Second, SAE feature IDs are not automatically meaningful. They become useful when they recur across prompts, align with NLA explanations, and match observable answer behavior.

- Third, open-weight models are laboratories, not perfect proxies for every frontier model. The Platonic Representation Hypothesis gives us a reason to study representational convergence, but it should not be used to claim that one open model fully represents all models.

- Finally, this is not chain-of-thought extraction. We are building a diagnostic layer around observable activations in open models.
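The second caveat suggests a practical filter. The sketch below assumes you have SAE feature activations for each prompt. It keeps only the feature IDs that rank in the top-k of at least a given fraction of prompts. The `k` and `min_fraction` defaults are arbitrary assumptions, and the toy data is illustrative.

```python
from collections import Counter


def recurring_features(acts_by_prompt, k=3, min_fraction=0.5):
    """Features ranking in the top-k of at least `min_fraction` of prompts.

    acts_by_prompt: {prompt_id: {feature_id: activation}}.
    Returns {feature_id: number of prompts}, most frequent first.
    """
    counts = Counter()
    for acts in acts_by_prompt.values():
        top = sorted(acts, key=acts.get, reverse=True)[:k]
        counts.update(top)
    needed = min_fraction * len(acts_by_prompt)
    return {f: n for f, n in counts.most_common() if n >= needed}


# Toy activations for three prompts (hypothetical feature IDs).
acts = {
    "p1": {10: 5.0, 20: 3.0, 30: 1.0, 40: 0.5},
    "p2": {10: 4.0, 50: 3.5, 20: 0.2, 60: 1.0},
    "p3": {70: 6.0, 10: 2.0, 60: 1.5},
}
print(recurring_features(acts, k=2))  # → {10: 3}
```

Features that survive this filter are the ones worth cross-checking against NLA explanations and the model's actual answers.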

Why this is useful for brands

For brands, the practical value is a new kind of AI audit, one that may extend our existing audit in the near future.

Traditional AI Visibility asks:

Where does the brand appear in AI-generated answers?

Latent perception analysis asks:

What does the model seem prepared to believe, retrieve, or emphasize about the brand before it answers?

That matters because future AI discovery will depend not only on pages being indexed, but also on whether the brand’s identity, products, and relationships are legible to machines.

This is where structured data and Knowledge Graphs become strategic. They do not simply decorate web pages. They provide stable semantic anchors that help AI systems connect entities, products, claims, and evidence.
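As an illustration of such an anchor, the snippet below builds a Schema.org JSON-LD description of a product linked to its brand and to external identifiers. It uses existing Schema.org types and properties (`Car`, `Brand`, `brand`, `fuelType`, `sameAs`). Every URL is a hypothetical placeholder; substitute the real Wikidata, Wikipedia, and entity-page URLs before publishing.

```python
import json

# Hypothetical placeholder URLs: replace with the real entity identifiers.
WIKIDATA_BRAND = "https://www.wikidata.org/wiki/Q_BRAND_PLACEHOLDER"
WIKIPEDIA_BRAND = "https://en.wikipedia.org/wiki/BRAND_PLACEHOLDER"
ENTITY_PAGE = "https://example.com/entities/renault-5-e-tech"


def product_jsonld(name, brand, fuel_type, brand_same_as, page_url):
    """Build a Schema.org Car node whose brand points to external identifiers."""
    return {
        "@context": "https://schema.org",
        "@type": "Car",
        "@id": page_url + "#product",   # stable identifier for this entity
        "name": name,
        "url": page_url,
        "fuelType": fuel_type,
        "brand": {"@type": "Brand", "name": brand, "sameAs": brand_same_as},
    }


doc = product_jsonld("Renault 5 E-Tech", "Renault", "Electric",
                     [WIKIDATA_BRAND, WIKIPEDIA_BRAND], ENTITY_PAGE)
print(json.dumps(doc, indent=2))
```

The `sameAs` links are what turn a page-level description into a connection between Knowledge Graph nodes.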

The next step is to make this diagnostic loop repeatable:

measure latent perception → enrich machine-readable context → rerun the model → measure the shift
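Making the loop repeatable mostly means holding everything fixed except the intervention. Below is a minimal harness built on a hypothetical `run_frame(context)` callable that you would supply. It runs every frame prompt with a given machine-readable context and returns per-prompt SAE activations.

We assume the intervention reaches the model as added context, for example structured data placed in the prompt, because an open model's weights do not change when a website changes. The shift measure here is one minus feature-set overlap, which ignores activation magnitudes.

```python
from statistics import mean


def overlap(a, b):
    """Jaccard overlap of two feature-ID collections (1.0 when both are empty)."""
    a, b = set(a), set(b)
    return len(a & b) / len(a | b) if a | b else 1.0


def diagnostic_loop(run_frame, baseline_context, enriched_context):
    """measure -> enrich -> rerun -> measure the shift, per prompt and on average."""
    before = run_frame(baseline_context)
    after = run_frame(enriched_context)
    per_prompt = {p: 1.0 - overlap(before[p], after[p]) for p in before}
    return per_prompt, mean(per_prompt.values())


def toy_run_frame(context):
    # Stand-in for a real model run (illustrative only): hypothetical feature 21
    # appears at the brand prompt only when the context mentions E-Tech.
    brand_acts = {11: 3.0, 12: 2.0}
    if "E-Tech" in context:
        brand_acts[21] = 1.5
    return {"brand": brand_acts, "risk": {11: 1.0}}


per_prompt, average = diagnostic_loop(
    toy_run_frame, "Renault", "Renault; products: Renault 5 E-Tech")
print(per_prompt, average)
```

Reporting the per-prompt shifts alongside the average shows which parts of the brand frame an intervention actually moved.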

That is where AI Visibility becomes testable.

Conclusions and future work

We are entering a phase where AI systems do not simply retrieve information. They compress, organize, and activate latent semantic frames before generating an answer.

This changes how we should think about visibility on the web. The future of AI Visibility is not only about ranking pages or appearing in citations. It is about shaping the machine-readable semantic environment that models use to construct meaning. Brands that expose clear entities, products, relationships, provenance, and structured evidence will increasingly have an advantage in how they are interpreted by AI systems.

Natural Language Autoencoders and Sparse Autoencoders give us an early glimpse into this hidden layer of perception. They provide a new diagnostic instrument for understanding how models internally organize brands, products, and competitive landscapes.

For the first time, we can begin to test a hypothesis that has long existed in SEO and semantic search:

better structured knowledge changes not only what machines retrieve, but potentially how they internally frame the world itself.

That makes AI Visibility measurable, testable, and eventually optimizable.