The difference between AI on its own and AI connected to a Knowledge Graph (AI + KG) is the difference between a brilliant consultant who has just walked through the door and one who has worked inside your organisation for a decade. Both are smart, but only one truly understands your systems, history and context.

This guide makes one central argument: the companies building durable competitive advantage from AI are not the ones with access to the best LLMs. They are the ones who have connected those LLMs to a structured, machine-readable representation of everything their organisation knows. In knowledge architecture terms, that structure is called a Knowledge Graph. In strategic terms, we call it Corporate Memory.

Every leadership meeting has a version of this conversation. The CTO has deployed a large language model. The CMO is watching AI-generated content flood the market. The Digital Director is fielding questions from Legal about data leaving the building. And the CEO is asking one simple question: why aren’t we seeing more ROI?

The answer, almost always, is the same: your AI doesn’t actually know your business.

An LLM trained on public internet data is extraordinarily good at sounding fluent. It can draft, summarise, translate, and reason with remarkable apparent competence. But fluency is not accuracy, and confidence is not truth. Without an anchor in your proprietary data—your products, your brand voice, your customer relationships, your competitive positioning—a standalone LLM is, at its core, a probabilistic guessing machine built on someone else’s knowledge.

The Illusion Of Generative AI Fluency

There is a seductive quality to generative AI that has misled countless business pilots: it always sounds right. Unlike a search engine that returns blue links and a blank expression, an LLM answers in the first person, in professional prose, with apparent certainty. It feels authoritative because it has absorbed the surface patterns of authoritative writing.

But this is a performance of competence, not competence itself. LLMs are trained to predict the most statistically probable next word. They are not trained to be accurate about your pricing, your product catalogue, your brand guidelines, your regulatory obligations, or your competitive context. When asked about these things, they do what probability engines do: they generate the most plausible-sounding answer, whether or not it corresponds to reality.

The AI Reality Gap

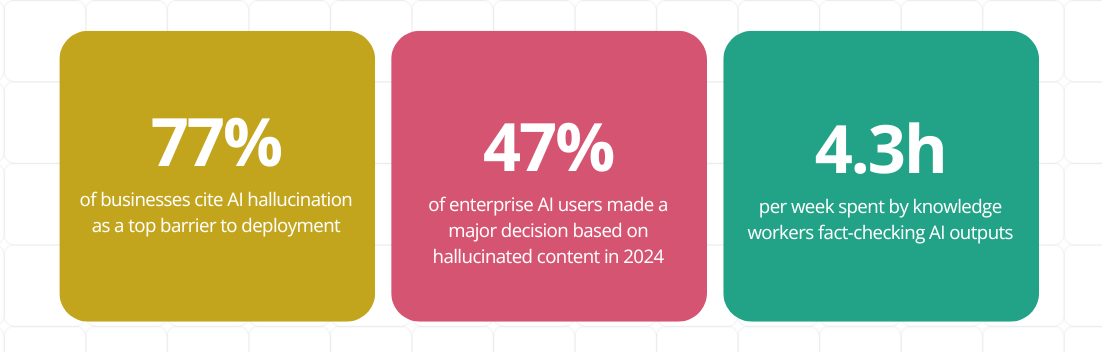

The technical term for this is hallucination. The business term for it is liability.

Sources: Deloitte 2025 AI Survey | Microsoft AI Hallucination Report 2025

The solution is not a better LLM. Every major model can still hallucinate on tasks that require knowledge it does not have. The solution is to stop asking the LLM to guess and start giving it something accurate to work with. That is precisely what a Knowledge Graph provides.

The Engine Vs. The Map: A Fundamental Shift

To understand the relationship between Large Language Models and Knowledge Graphs, it is helpful to use a simple analogy: the LLM is the engine, while the Knowledge Graph (KG) is the map. An engine provides the power to move, but without a map, it cannot ensure you reach the correct destination. In a business context, the destination is factual accuracy and strategic alignment.

The power of an LLM lies in its ability to process and generate natural language. On its own, however, it can only follow the probabilistic paths it learned during training, which are often outdated or disconnected from your current business reality. A Knowledge Graph gives the AI a structured, verifiable navigation system, so that what it produces is grounded in your actual business facts rather than in statistical likelihood.

Why LLMs Hallucinate By Design

Hallucination is not a bug in Large Language Models; it is a consequence of how they function. Because these models are built on probability, they are optimised to generate plausible-sounding text, not necessarily factual text.

When asked about a specific proprietary product or internal policy that was not part of their training data, they fill in the gaps using the patterns they have learned from the public internet. For a business, this means your unique product benefits are often replaced by generic industry clichés, stripping away your competitive advantage and potentially misleading your customers.

What “Corporate Memory” Actually Means

The phrase Corporate Memory is not a metaphor. It describes a specific technical architecture in which everything your organisation knows—about its products, customers, markets, brand positioning, regulatory context, and competitive landscape—is represented as structured, interconnected, machine-readable knowledge.

This is distinct from a document repository, a CRM, or a data warehouse. Those systems store information. A Knowledge Graph represents meaning—the relationships between entities, the attributes that define them, and the context that makes a piece of information useful versus misleading.
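To make the distinction concrete, here is a minimal sketch, assuming a toy in-memory graph of subject-predicate-object triples. The entity names (`ex:EcoRunner`, `ex:AcmeBrand`), the predicates and the `TinyGraph` class are hypothetical, and the code uses only the Python standard library; a production Knowledge Graph would typically sit in an RDF or graph database and use shared vocabularies such as schema.org.

```python
from collections import defaultdict

# A triple links a subject entity to an object (another entity or a literal value) via a predicate.
Triple = tuple[str, str, str]


class TinyGraph:
    """Toy in-memory triple store, for illustration only (hypothetical)."""

    def __init__(self) -> None:
        self.triples: set[Triple] = set()
        # Index outgoing edges by subject so an entity's relationships are one lookup away.
        self.by_subject: defaultdict[str, list[tuple[str, str]]] = defaultdict(list)

    def add(self, s: str, p: str, o: str) -> None:
        """Store a triple once; repeated additions are ignored."""
        if (s, p, o) not in self.triples:
            self.triples.add((s, p, o))
            self.by_subject[s].append((p, o))

    def objects(self, s: str, p: str) -> list[str]:
        """Return every value of predicate p for subject s."""
        return [o for pred, o in self.by_subject.get(s, []) if pred == p]

    def describe(self, s: str) -> dict[str, list[str]]:
        """Group all attributes and relationships of one entity by predicate."""
        grouped: dict[str, list[str]] = {}
        for p, o in self.by_subject.get(s, []):
            grouped.setdefault(p, []).append(o)
        return grouped


g = TinyGraph()
g.add("ex:EcoRunner", "rdf:type", "schema:Product")
g.add("ex:EcoRunner", "schema:brand", "ex:AcmeBrand")
g.add("ex:EcoRunner", "ex:certification", "eco-certified")
g.add("ex:AcmeBrand", "ex:voiceRule", "avoid superlatives")
g.add("ex:AcmeBrand", "ex:voiceRule", "avoid superlatives")  # duplicate: no effect

# Follow a relationship: product -> brand -> brand voice rule.
brand = g.objects("ex:EcoRunner", "schema:brand")[0]
print(g.describe("ex:EcoRunner"))
print(g.objects(brand, "ex:voiceRule"))  # ['avoid superlatives']
```

Because each fact is an explicit, typed edge rather than a sentence buried in a document, the brand's voice rule is reached from the product by following relationships, not by searching text.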

When your AI operates on Corporate Memory, it does not need to guess that your flagship product is eco-certified, or that your brand voice avoids superlatives. It knows, because that knowledge is encoded in the graph. The result is output that is accurate, on-brand, and compliant, at scale.
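One common way to put this into practice is to retrieve the relevant facts from the graph and place them in front of the model's task. The sketch below assumes the facts have already been retrieved as (subject, predicate, object) triples; `facts_about`, `build_grounded_prompt` and the example facts are hypothetical, and the code only assembles a prompt string without calling any particular LLM API.

```python
Triple = tuple[str, str, str]

# Facts assumed to have been retrieved from the Knowledge Graph (hypothetical examples).
FACTS: list[Triple] = [
    ("EcoRunner", "certification", "eco-certified"),
    ("EcoRunner", "material", "recycled polyester"),
    ("AcmeBrand", "voice rule", "avoid superlatives"),
    ("OtherShoe", "material", "leather"),
]


def facts_about(entities: set[str], facts: list[Triple]) -> list[Triple]:
    """Keep only the triples whose subject is one of the requested entities."""
    return [t for t in facts if t[0] in entities]


def build_grounded_prompt(task: str, entities: set[str], facts: list[Triple]) -> str:
    """Put verified facts ahead of the task so the model works from them instead of guessing."""
    lines = [f"- {s} | {p} | {o}" for s, p, o in facts_about(entities, facts)]
    return (
        "Use ONLY the facts below. If a fact you need is missing, say so.\n"
        "Facts:\n" + "\n".join(lines) + f"\n\nTask: {task}"
    )


prompt = build_grounded_prompt(
    "Write a two-sentence product blurb for EcoRunner.",
    {"EcoRunner", "AcmeBrand"},
    FACTS,
)
print(prompt)
```

Only facts about the requested entities reach the prompt, so irrelevant data (here, `OtherShoe`) is never exposed. Instructing a model to rely on supplied facts reduces invented claims but does not strictly guarantee their absence, which is why outputs are usually checked against the same graph.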

AI Without a KG vs. AI With a KG: A Comparison

| Dimension | LLM Alone | LLM + Knowledge Graph |
| --- | --- | --- |
| Data foundation | Public internet data, frozen at training cutoff | Your proprietary data, continuously updated and enriched |
| Accuracy on brand and product | Probabilistic: will hallucinate with confidence | Grounded in verified entity data, traceable to source |
| Competitive moat | None: all competitors access the same models | Compounding: your KG grows richer and more valuable over time |
| Brand voice | Generic: mirrors average internet tone and style | On-brand: grounded in your semantic layer and approved terminology |
| Data governance | Proprietary data at risk of leakage via prompts | Structured, permissioned, auditable data flows |
| Scalability | Content quality degrades at volume | Quality scales with KG richness; semantic precision maintained |
| ROI trajectory | Plateaus: output is commodity, correction costs rise over time | Compounding: each enrichment increases the value of every output |

Scaling Content With Semantic Precision

The content marketing challenge of the AI era is not generation—it is differentiation. Every competitor now has access to tools that can produce thousands of words on any topic in seconds. The question is no longer “can we produce more content?” It is “can we produce content that our competitors cannot replicate?”

The answer lies in semantic precision: the ability to generate content that is grounded in your specific entities, attributes, relationships, and brand voice. This is content that reflects genuine organisational knowledge rather than an internet-average approximation.

From Volume to Value: The Content Pipeline

A Knowledge Graph transforms AI from a one-off content generator into a structured content pipeline. Consider what becomes possible:

- Product descriptions at scale: Each description accurately reflects verified attributes (materials, certifications, compatibility, regulatory status) without a human having to verify every output.

- Personalised content variants: The KG encodes audience segments, channel preferences, and contextual relevance, enabling systematic personalisation rather than manual customisation.

- SEO-optimised entity coverage: Content is structured around semantic entities that search engines and AI answer engines can interpret unambiguously, improving discoverability in both traditional and generative search.

- Brand-consistent voice at volume: Tone, terminology, approved claims, and positioning are encoded in the semantic layer, not re-learned from scratch for each generation task.

- Automatic internal linking and cross-reference: The KG understands topical relationships, enabling AI to surface relevant cross-links without manual editorial intervention.
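The first and last items in the list above can be sketched together, assuming product attributes and topic relationships live in plain Python structures standing in for the graph. The `Product` class, the catalogue entries and the `describe` and `related` helpers are hypothetical. The description is rendered by a deterministic template rather than an LLM, so every claim in it maps to a stored attribute, and cross-links are ranked by how many topics two products share.

```python
from dataclasses import dataclass, field


@dataclass
class Product:
    name: str
    materials: list[str]
    certifications: list[str]
    topics: set[str] = field(default_factory=set)  # topical entities the product relates to


# Hypothetical catalogue standing in for product entities in the Knowledge Graph.
CATALOGUE = {
    "trail-shoe": Product("Trail Shoe", ["recycled polyester"], ["eco-certified"], {"running", "sustainability"}),
    "road-shoe": Product("Road Shoe", ["mesh"], [], {"running"}),
    "rain-jacket": Product("Rain Jacket", ["recycled nylon"], ["eco-certified"], {"sustainability", "outdoor"}),
}


def describe(p: Product) -> str:
    """Render a description from verified attributes only; unknown attributes are omitted."""
    parts = [f"{p.name} is made from {', '.join(p.materials)}."]
    if p.certifications:
        parts.append(f"It is {', '.join(p.certifications)}.")
    return " ".join(parts)


def related(slug: str, k: int = 2) -> list[str]:
    """Suggest up to k cross-links: products sharing the most topics (ties broken alphabetically)."""
    mine = CATALOGUE[slug].topics
    scored = [
        (len(mine & other.topics), other_slug)
        for other_slug, other in CATALOGUE.items()
        if other_slug != slug and mine & other.topics
    ]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [s for _, s in scored[:k]]


print(describe(CATALOGUE["trail-shoe"]))  # Trail Shoe is made from recycled polyester. It is eco-certified.
print(related("trail-shoe"))  # ['rain-jacket', 'road-shoe']
```

In practice an LLM would usually turn such verified attributes into fluent copy; the template here simply makes the grounding visible, since a product with no certification gets no certification claim.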

This is the content pipeline that CMOs are being asked to build – not a collection of AI tools, but an integrated knowledge architecture that transforms proprietary intelligence into scalable, differentiated output.

The Differentiation Imperative And Compounding Advantage

There is a structural reason why AI + KG creates a durable competitive advantage that AI alone cannot: the LLM is a commodity shared by every competitor. The Knowledge Graph is not. It is built from your data, structured around your entities, enriched by your domain expertise, and improved by your editorial decisions over time.

The Compounding Asset

The most strategically important characteristic of a Knowledge Graph is that it appreciates over time. Unlike a software tool that depreciates or a model subscription that delivers identical capability to every subscriber, a Knowledge Graph is a unique organisational asset.

Each enrichment—a new product added, a brand attribute refined, a customer relationship mapped—increases the value of every AI output subsequently generated from that graph. The organisation that began building its KG twelve months ago already has a structural advantage over one beginning today. The gap compounds.

This is the nature of Corporate Memory: it is not a project with a completion date. It is an ongoing investment in the semantic infrastructure that makes your AI uniquely capable of representing your business accurately. The earlier the investment begins, the wider the moat.

US vs. EU Market Perspectives

While the strategic value of AI + Knowledge Graphs is universal, the immediate pressures differ by region. Whether you are driving for market dominance in the US or navigating strict governance in the EU, the Knowledge Graph is the bridge between AI potential and business reality.

The US perspective: accelerating ROI and content velocity

In the US, the mandate is performance. Executives are under intense pressure to deploy AI faster and more broadly to capture market share. However, many AI projects fail to deliver production value because of the "accuracy gap", where AI fluency doesn't match business facts.

The AI + KG Solution: The Knowledge Graph transforms AI from an experimental cost into a compounding asset. By grounding models in proprietary data, US organisations can scale content and decision-making at 10x speed without sacrificing accuracy. This architecture eliminates the expensive bottleneck of manual fact-checking.

The EU perspective: compliance, trust, and data sovereignty

In the EU, the mandate is governance. With the EU AI Act and GDPR, compliance is an active operational reality. Leaders must ensure that AI outputs are auditable, traceable, and respect data residency.

The AI + KG Solution: The Knowledge Graph provides architectural trust. It creates a structured, permissioned data layer where every AI output can be traced back to its source. This ensures that organisational intelligence remains sovereign and compliant, allowing EU businesses to leverage AI power without exposing proprietary data to public models.
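Traceability of this kind depends on every fact carrying its provenance. The sketch below assumes each fact records a source identifier and the date it was last verified; the `Fact` structure, the source identifiers and `answer_with_sources` are hypothetical. Real deployments often model provenance with standards such as W3C PROV-O or RDF named graphs.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Fact:
    subject: str
    predicate: str
    value: str
    source: str  # where the fact came from (hypothetical identifier)
    as_of: date  # when the fact was last verified


# Hypothetical facts with provenance attached.
FACTS = [
    Fact("EcoRunner", "certification", "eco-certified", "doc:cert-register-2025", date(2025, 3, 1)),
    Fact("EcoRunner", "price_eur", "129", "feed:product-catalogue", date(2025, 6, 10)),
]


def answer_with_sources(subject: str, predicates: list[str]) -> tuple[str, list[str]]:
    """Return a plain-text answer plus an audit trail naming the source of every fact used."""
    used = [f for f in FACTS if f.subject == subject and f.predicate in predicates]
    text = "; ".join(f"{f.predicate}: {f.value}" for f in used)
    trail = [f"{f.predicate} <- {f.source} (verified {f.as_of.isoformat()})" for f in used]
    return text, trail


text, trail = answer_with_sources("EcoRunner", ["certification", "price_eur"])
print(text)  # certification: eco-certified; price_eur: 129
for line in trail:
    print(line)
```

The audit trail is produced alongside the answer rather than reconstructed afterwards, which is what lets a reviewer check each statement against its origin.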

| EU Executive Note |
| --- |
| The GDPR and the EU AI Act do not prohibit the use of AI in general. They require that AI systems processing personal data have a lawful basis and operate with explainability and governance. A Knowledge Graph does not create compliance, but it creates the structured, auditable knowledge infrastructure on which compliant AI can be built. For EU enterprises, this is not optional architecture; it is the foundation of defensible AI deployment. Note: the EU AI Act's prohibited practices have been enforceable since February 2025. Obligations for general-purpose AI (GPAI) models apply from August 2025. Most high-risk AI system requirements apply from August 2026, with those for high-risk AI embedded in regulated products following in August 2027. |

WordLift AI-Readiness Audit

Not sure where your organisation sits on the AI + KG readiness spectrum? WordLift’s AI-Readiness Audit provides a structured assessment of your current knowledge architecture, identifies the highest-value enrichment opportunities, and delivers a prioritised roadmap for building Corporate Memory. Run your AI Audit.

FAQs

I Can Use ChatGPT Or Gemini For A Small Monthly Fee. Why Should I Invest In WordLift?

A basic subscription gives you access to a powerful engine, but it only knows public data. If you ask it to write about your specific services, it guesses based on industry averages, forcing your team to spend hours correcting it. You aren’t paying WordLift to replace these LLMs; you are investing in WordLift to build a Knowledge Graph that acts as your brand’s “brain.”

We connect this proprietary brain to those LLMs so that when they generate content, it is grounded in your verified facts, automatically optimised for SEO, and ready to publish at scale. You are investing to eliminate the expensive bottleneck of manual AI verification.

Is Building A Knowledge Graph A Heavy Technical Lift?

No. With modern semantic SEO tools like WordLift, a Knowledge Graph can be built organically from your existing structured data, product feeds, and website content. It is designed to scale as your data updates, becoming more efficient and valuable as it grows. The implementation is often a matter of weeks, not months.