If you’re part of a brand or agency’s marketing team, you’re well aware of the race to AI citation share that’s been happening over the last couple of years. And if this is your first time hearing about it, that’s a mighty big rock you’ve been living under.

AI citation share (aka which sources AI models are pulling from when building their answers) has shifted dramatically over the past year:

- At first, LLMs were mainly pulling from publisher content thanks to the trust and authority layer.

- Then, social exploded (particularly thanks to YouTube Long Videos’ wealth of natural language content in the form of video transcripts).

- And now? Review sites and community platforms have stepped up to become the new frontier.

If you’re sitting there reading this thinking, “dude, I still haven’t even figured out the social piece,” you are far from alone in that sentiment. But… you’re also running out of time to catch up, because the game has already moved again.

Here’s what happened, why it happened, and where I think it’s going.

A Quick Primer on Why Citations Even Matter

This is a necessary explainer piece for this article (if you have AEO chops already, feel free to skip).

- In traditional SEO, you ranked. You climbed the results page. Users scrolled, clicked, and *hopefully* converted.

- AI search doesn’t work that way. When someone asks ChatGPT or Claude to recommend a new CRM platform for their organization, or asks AI Mode to explain global warming, the model constructs an answer, picks its own sources, and cites. Being referenced in that answer is the new version of ranking #1.

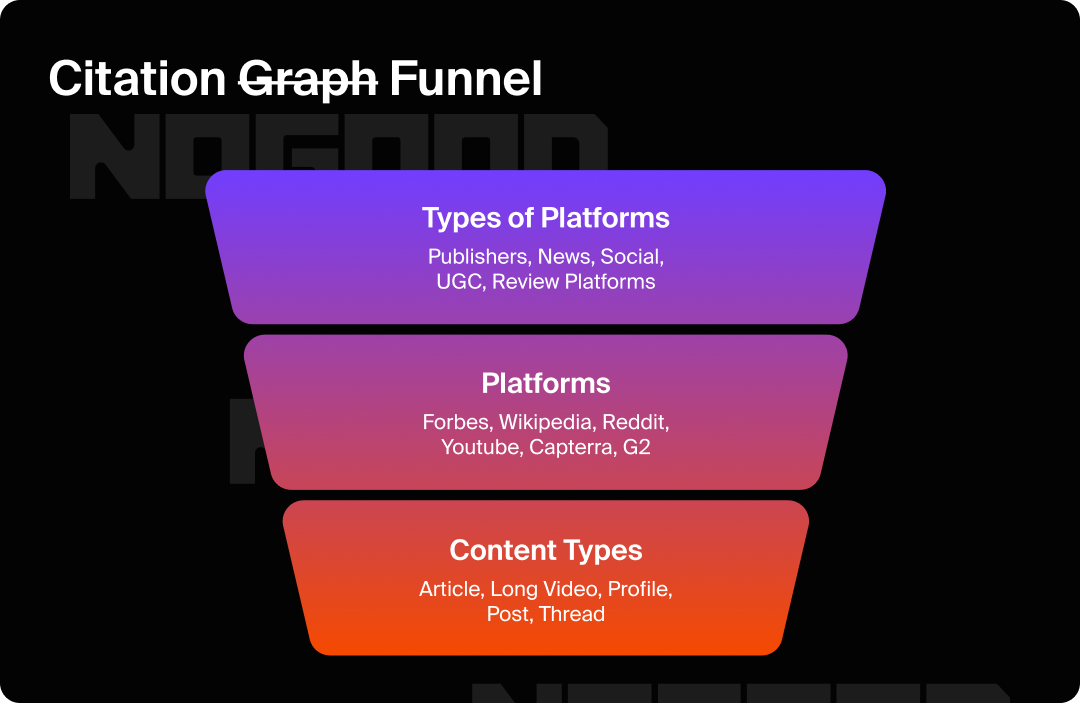

The types of platforms, specific platforms, and content types within those platforms that get cited make up what’s been coined the “citation graph,” though it’s starting to look more like a funnel the more research is done on it.

And that graph is not static. It’s been rewritten multiple times in the past 18 months alone. Let’s build the timeline, shall we?

Phase 1: Editorial Was King (The Trust Era)

In the early days of generative AI search, models leaned heavily on what they’d been trained on: established publishers, news sites, and authoritative long-form editorial content. Think the New York Times, TechCrunch, Wirecutter.

In other words, the kind of stuff that’s been accumulating trust signals (from humans and search engines) for decades.

18 months ago, this made sense. LLMs were created to provide accurate answers to hyper-specific questions, and the most defensible measurement for accuracy at the time was domain authority and editorial credibility. If a source had a track record of producing reliable content, it was a safe bet.

The result was that if your brand wasn’t mentioned in earned media (press coverage, review roundups, authoritative third-party write-ups), you basically didn’t exist in AI answers.

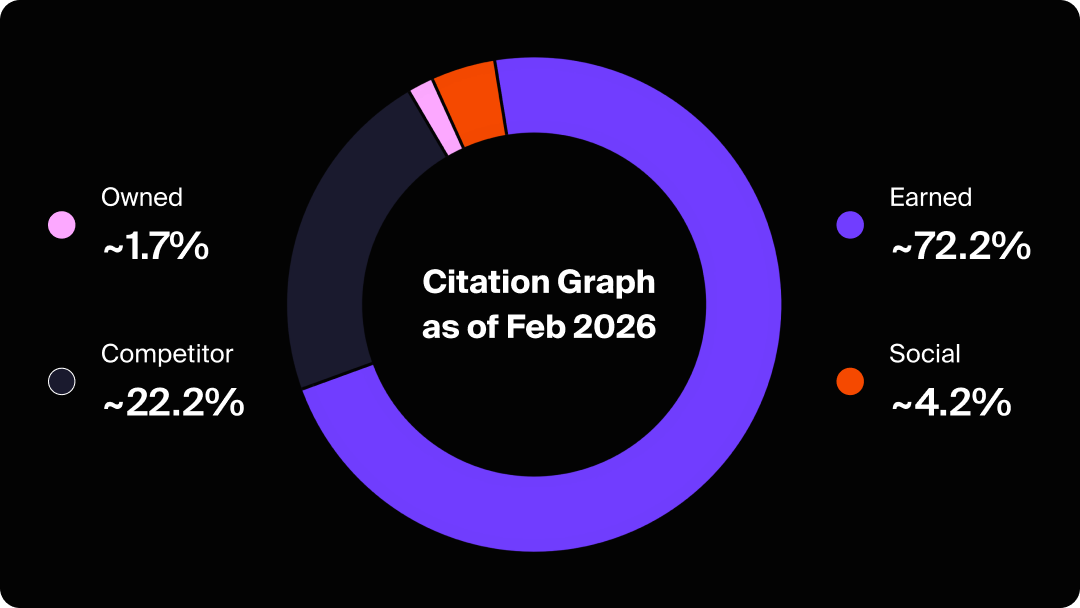

Admittedly, this part of the citation graph hasn’t changed all too much. Earned citations still dominate the overall citation universe today, accounting for roughly 72% of all AI citations according to Goodie’s research across 45.2 million citations.

But “dominant” doesn’t mean “stagnant.” While earned sources held their share, everything else started moving fast.

Phase 2: Social Exploded (The Authenticity Shift)

Starting in late 2025, something unexpected happened: social citations skyrocketed.

According to Goodie’s social citation study analyzing 6.1 million citations across 10 AI platforms, average daily social citations grew from roughly 719 per day in September 2025 to over 3,200 per day by December. That’s a 4x increase in about three months. Social as a share of total citations went from 2.1% to over 7% in the same window.

And it wasn’t just growth. It was a restructuring of what AI models considered “valid evidence.”

YouTube Led the Charge; Here’s Why

The biggest breaking news in this shift was YouTube overtaking Reddit as the top social citation source, climbing from 18.9% of social citations in August 2025 to 39.2% by December.

Here’s my theory on why (and, yes, Goodie’s data backs this up): AI models can’t actually watch videos; they can only read the transcripts. In an LLM’s metaphorical eyes, a 15-minute YouTube video is actually thousands of words of text. And not only that, but that text is usually transcribed speech from a real human, talking through a real problem or opinion in their own words. It’s dense and it’s structured, which means it’s extractable.

Goodie’s follow-up study, a content type breakdown, confirms this pattern. YouTube Long Videos generated 574,420 social citations in that study. YouTube Shorts generated 11,160. That’s a 51-to-1 ratio on the same platform. The format that gives AI models the most structured, text-rich material to pull from wins. Every. Single. Time.

This is what Mostafa ElBermawy (shoutout boss) calls the “Extractability Principle”: long-form, text-dense content on a stable URL dominates AI citations because it gives models something concrete to work with. YouTube Long Videos have both of those things out the wazoo.

The Platform Coupling Wrinkle

One thing worth understanding (and honestly, this part is a little “duh” when you consider the legal and commercial relationships): not all social platforms are cited equally across all AI models.

“Platform coupling” (the structural relationship between a social platform and an AI model) determines a huge portion of citation behavior.

A few examples from the data:

- 99.7% of all X citations come from Grok. Not because X content is better. Because xAI owns X.

- 82.5% of all YouTube citations come from Google AI surfaces (AI Overviews, Gemini, AI Mode). Because Google owns YouTube.

- Instagram showed near-zero citations until October 2025, then emerged as a citation source almost entirely via Google’s AI Overview. Google started indexing Instagram in July 2025.

The citation graph isn’t purely meritocratic. It’s shaped by partnerships, licensing deals, and first-party ownership. And that goes both ways (it can help citation share and it can really throw a wrench in it). A prime example of this is when Reddit sued Perplexity for unauthorized scraping in October 2025; Perplexity’s Reddit citation share dropped 86% essentially overnight.

This matters because your “social strategy” can absolutely no longer be just about “reach” and “engagement”, and that’s actually been the case for a good while now.

Before we move on to Phase 3 and my predictions for what’s going to happen next (get excited), let’s recap where we’re at: trusted publisher citations still matter, yes, but now social media is getting its time in the spotlight in a big way, too.

Phase 3: Reviews Are Having a Moment (The Trust Vacuum)

Here’s where things get really interesting (and where I’ll admit that this is part data, part theory).

We’re now seeing AI models increasingly pull from review platforms: G2, Capterra, Trustpilot, and Reddit threads that function similarly. And I think the reason is something that a lot of us don’t want to say out loud: we’re at the point where the internet is so full of AI content that models are struggling to find sources that they can trust.

Publisher content, blog posts, and social posts are all increasingly susceptible to “AI-sloppification.” Anyone can open up ChatGPT or Claude and spin up 5,000 words of convincing-sounding content in an afternoon. But:

- On G2 or Capterra, you have to verify you’re a human before you can post a review. I know we all hate the Captcha games they make us play, but it worked against bots, and it works against LLMs.

- My additional theory regarding review sites is that people typically wouldn’t bother to use AI to write a product review (unless they’re being paid to, which is black hat marketing in itself). When people leave a review, they tend to feel strongly positive or negative towards a product. That’s why you don’t see 3-star reviews very often. They’re authentic because they’re impassioned.

- On Reddit, there’s a long history of friction (account history, karma, community norms) that makes it harder (not impossible, but wayyyy harder) to game their system with AI.

Here’s what I’m getting at: these platforms have become some of the last reliably human-generated content on the internet, and AI models have now figured that out.

As an aside into agentic commerce for just a second, Goodie’s agentic commerce research reinforces this: review volume and sentiment carry an 11% weight in product visibility for agentic AI shopping platforms, and third-party review platforms are explicitly noted as heavily weighted sources. The median product recommended by Amazon Rufus has nearly 3,000 reviews. ChatGPT explicitly pulls from “community discussions and public websites.”

Why This Matters Doubly for Reviews

Here’s the fun part. The double-whammy that makes reviews especially important right now: they’ve always mattered for agentic commerce, and they’re now becoming critical for standard AI search queries too.

Agentic commerce has always treated review volume and sentiment as major signals. If an AI agent is tasked with “find me the best CRM under $100/month for a five-person team,” it pulls review data to rank its recommendations.

But now, reviews are also shaping informational AI answers. A user asking “what’s the best project management tool?” in ChatGPT is going to get an answer shaped by the same review signals. The line between agentic commerce and conversational AI search is blurring, and reviews are the throughline.

In the year of “bring 2016 back,” I have to admit that review generation coming back as a legitimate marketing strategy is a pretty insane coincidence.

What’s Next: Three Theories on Who Holds the Citation Crown

If the pattern holds (editorial, then social, then reviews), what comes after reviews? Or maybe the better question is: does anything come after reviews, or are we watching the new dominant source lock in?

I have three theories. None of them are mutually exclusive. And honestly, the fact that I’m not sure which one is right (a difficult thing for me to admit) is part of what makes this space so interesting right now.

Scenario 1: Review Sites Hold the Crown

Here’s the main factor in making the case for reviews keeping their dominance: human psychology. I already talked about this previously, but I have a pretty strong feeling about it (and yeah, I do see the irony in that statement).

Review platforms were actually one of the few places on the internet that bots couldn’t reliably infiltrate even before generative AI. The barrier has always been both technical and social. People who have strong feelings about a product (whether they be good or bad) are extremely motivated to share them. That passion is the engine that powers review platforms, and it’s not going away just because AI exists (if anything, I’d argue that people’s strong anti-AI feelings make reviews even more human).

Think about it: when was the last time you were furious about a SaaS product’s customer support and thought, “nah, I’ll just let the AI write that review”? Exactly. The people who bother to leave reviews are usually the ones who care the most. That’s a self-selecting pool of genuine human sentiment that AI content just can’t replicate.

The threat here (and it’s a real one) is coordinated manipulation: brands incentivizing employees, customers, or paid reviewers to flood platforms with fake positivity. It’s not a new problem, but it becomes a much bigger one if review platforms become the de facto trusted layer for AI citations. Expect this to become a black hat service if it isn’t already. Watch for platforms like G2 and Capterra to double down on their verification infrastructure in response.

Scenario 2: Reddit Becomes the Last Trusted Human Space

Reddit is such a special case in my opinion that I think it deserves its own theory.

The thing about Reddit communities is that they are ruthlessly self-policing. Mods will ban you for posting something if they even catch a whiff of it being marketing. The community will eviscerate you in the comments if your take reads as inauthentic. There’s a culture of skepticism baked into how Reddit operates that makes it genuinely resistant to the kind of AI slop that’s washing over the rest of the internet.

That same culture (annoying as it can be if you’ve ever tried to legitimately participate in a community as a brand) might be Reddit’s structural advantage as an AI citation source. LLMs need sources that they can point to as “real human conversation.”

Reddit, for all its chaos, has one of the strongest claims to that label.

The interesting data point that backs this up: even when Perplexity’s Reddit citation share collapsed 86% after the lawsuit, Reddit’s absolute citation volume was still growing across other models. YouTube outgrew it in Goodie’s first study. It briefly reclaimed the top social spot in January 2026 before YouTube pulled ahead again. Reddit keeps coming back. There miiiiiight be a reason for that beyond just access deals 👀

Scenario 3: The Whole Thing Equalizes

Okay, the third theory is actually the most boring one, but I’d argue it might also be the most likely: we’re just watching a system calibrate.

By all accounts, AI search is genuinely new. The models are figuring out, in real time, what kinds of sources produce the best answers for their users. Editorial was over-indexed first. Social was under-indexed, then rapidly corrected. Reviews are having their moment. It wouldn’t be crazy to think this is less of a “who wins” story and more of a “still finding equilibrium” story.

Google’s OG algorithm has been doing exactly this since the beginning: over-correcting on backlinks, then content freshness, then E-E-A-T signals, slowly grinding toward something more balanced. AI citation share is probably going to do the same thing.

This is the case against brands latching onto a trend and over-rotating on it before we actually know what’s what. This is my argument for diversification over optimization. Build a presence everywhere that matters and stay close enough to the data to notice when the weights shift.

The short-form video trajectory is worth watching in this context too. Goodie found that YouTube Shorts grew 624% from October 2025 to January 2026, with Instagram Reels up 248%. These numbers are inflated by small baselines, but if models get better at parsing short-form metadata and transcripts (cough, cough; they will), the gap between long and short form narrows. The Extractability Principle still holds for now; it may not hold forever.

How to Track AI Search Engine Citations

Okay, let’s get practical up in here. Because my proclamation that “the citation graph is shifting” is only useful if you can actually see what’s happening for your brand. Here’s how to approach it:

- Dedicated AEO platforms are the most comprehensive option. If you’re serious about AI visibility, you’re going to want a platform that can give you this kind of cross-model view, because as I’ve established, each model has a completely different citation fingerprint. For a broad look at the AEO tooling landscape, our roundup of top GEO tools is a solid starting point.

- Manual spot-checking is a decent starting point if you’re not ready to invest in a full-on platform for AEO. Just run your key product or category queries across whichever LLM(s) your audience favors, note which sources get cited, and look for patterns in what’s getting referenced.

- Google Search Console and brand monitoring can catch some downstream signals. If AI traffic starts appearing, you’ll see referral patterns shift. But this is lagging data; you’re seeing what happened, not what’s happening.

The key metric to watch is citation share by source type: how much of the content AI models pull from when talking about your brand or category comes from your owned site, earned press, social platforms, and review sites? And where are the gaps?
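If you’re going the manual spot-checking route, here’s a minimal sketch of how you might tally citation share by source type from a list of cited URLs you’ve collected by hand. Everything in it is an assumption for illustration: the `SOURCE_TYPES` mapping, the `example-brand.com` domain, and the choice to file Reddit under “social” are all placeholders you’d swap for your own category. It isn’t how any AEO platform actually computes this.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical domain-to-source-type mapping; replace with your own brand,
# press targets, and platforms. Reddit is filed under "social" here by
# assumption; you may prefer to count it alongside review sites.
SOURCE_TYPES = {
    "example-brand.com": "owned",
    "techcrunch.com": "earned",
    "nytimes.com": "earned",
    "youtube.com": "social",
    "reddit.com": "social",
    "linkedin.com": "social",
    "g2.com": "review",
    "capterra.com": "review",
    "trustpilot.com": "review",
}


def classify(url: str) -> str:
    """Map a cited URL to a source type, or "other" if the domain is unknown."""
    host = (urlparse(url).hostname or "").lower()
    for domain, source_type in SOURCE_TYPES.items():
        # Match the bare domain and any subdomain (www., old., etc.).
        if host == domain or host.endswith("." + domain):
            return source_type
    return "other"


def citation_share(urls):
    """Return each source type's percentage of the cited URLs, rounded to 0.1."""
    counts = Counter(classify(u) for u in urls)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {kind: round(100 * n / total, 1) for kind, n in counts.items()}


if __name__ == "__main__":
    # Made-up sample of URLs noted while spot-checking a category query.
    sample = [
        "https://www.g2.com/products/x/reviews",
        "https://www.youtube.com/watch?v=abc",
        "https://techcrunch.com/a",
        "https://example-brand.com/pricing",
    ]
    print(citation_share(sample))
```

Run the same tally once a month per model and the gaps (and the shifts) become a lot easier to spot than they are in a spreadsheet of raw links.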

How to Increase AI Citations

This is the part everyone wants answered (even more so than “how to track it”), so let me be direct about it:

- Build on the platforms that feed the models your audience uses. Platform coupling means there’s no universal social strategy. If your buyers use Google’s AI surfaces for research, you’re gonna want to start making YouTube Long Videos. If they use ChatGPT, your Reddit and LinkedIn presence matter more than your Instagram.

- Make your content extractable. AI models don’t engage with content the way humans do. They parse it, chunk it, and extract claims. Long-form content with clear entity language (brand name, product name, category) stated explicitly, stable public URLs, and answer-first structures gets cited.

- And for the love of all that is good, please bring some originality to your content. If you have a theory, explain it. If you have an opinion, state it.

- Invest in reviews like it’s 2016. G2, Capterra, Trustpilot, and Reddit communities are becoming super important citation sources. Ask for reviews consistently, respond to the ones you get, and treat review volume as an AI visibility metric.

- Earn the press hits. Earned citations (news, authoritative reviews, and third-party writeups) still account for almost ¾ of all AI citations. Being mentioned in a Wirecutter roundup or a TechCrunch feature does more for your AI visibility than almost anything on your owned site.

- Stop treating social, SEO, and PR as separate teams. The citation graph doesn’t care about your org chart. The ~cool~ brands are already running social, SEO, content, and PR as one integrated system.

Getting Off of My Soap Box

I’ll be the first to admit that watching the citation graph shift in real time is equal parts exciting and exhausting. Just when you’ve built a strategy around one source type, the floor moves (sigh).

But there’s a throughline across all three phases. AI models are chasing the same thing humans have always chased when deciding who to trust: authenticity and credibility. Publishers had it first because of their track record. YouTube Long Videos earned it because they’re transcribed human speech. Reviews are holding it now because they’re some of the last corners of the internet where real people still show up with real opinions.

That pattern isn’t going to stop. Whatever comes after reviews will earn its place the same way. So instead of playing catch-up every time the graph shifts, the smarter move is building a presence that’s genuine everywhere it matters (earned media, social, reviews, owned) and monitoring closely enough to notice when the weights change before your competitors do.

AI Citation Graph: Frequently Asked Questions

What are AI citations?

In AI search, a citation is when a model references a specific source to support a part of its answer. Unlike a traditional search result (which just shows you links), AI citations are sources that have been actively integrated into the model’s response.

In short: being cited essentially means that the model in question has evaluated your content as credible enough to pull from.

Does my website still matter for AI citations?

It does, but it’s a smaller piece of the pie than you might think.

- Owned content (brand websites) accounts for roughly 1.7% of total AI citations

- Earned sources account for 72%

- Social accounts for 4.2%

Don’t get it twisted. Your site still matters (especially for establishing canonical product or brand information), but exclusive focus on owned content optimization is leaving significant citation share on the table.

Are AI citations the same across different AI models?

No, and this is one of the most important things to understand. Each AI model has a distinct “retrieval fingerprint”: characteristic patterns in where it sources information.

- Grok cites X almost exclusively.

- Google AI surfaces lean heavily on YouTube.

- ChatGPT and Claude both rely significantly on Reddit and LinkedIn.

If you optimize for one model or platform, you might be dropping visibility on another. See Goodie’s platform coupling study for the full model-by-model breakdown.

How are reviews different from regular content for AI visibility?

Reviews are increasingly valued as a signal of authentic human experience (something that’s hard to fake at scale on platforms with identity verification requirements). For agentic commerce specifically, review volume and sentiment carry significant weight (around 11% in Goodie’s 14-factor model). For informational queries, reviews on third-party platforms like Reddit function as community validation that AI models treat as credible evidence.

Can AI citations be gamed?

Technically, some tactics can influence citations, but the ones that work are also just good marketing.

- Creating high-quality, extractable content.

- Building a genuine presence on platforms the relevant AI models pull from.

- Earning press coverage and third-party mentions.

- Generating real reviews from real customers.

The citation graph is harder to game than SEO ever was because it focuses on whether the broader internet considers you a credible, frequently-referenced source. The shortcuts don’t really work here.

Do I need to be on every social platform?

No… and honestly, spreading yourself thin across platforms just to check boxes is a waste of time. The smart play is mapping which AI surfaces matter for your audience, then identifying which social platforms feed those surfaces.
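That mapping exercise can be sketched in a few lines. The couplings below are only the ones named in this article (Grok and X, Google AI surfaces and YouTube, ChatGPT and Claude with Reddit and LinkedIn); the `FEEDER_PLATFORMS` name and the simple count-based ranking are my own assumptions, and the pairings are a snapshot that will drift as the data does.

```python
# Platform coupling as described in this article; a snapshot, not a fixed rule.
FEEDER_PLATFORMS = {
    "Grok": ["X"],
    "Google AI surfaces": ["YouTube"],  # AI Overviews, Gemini, AI Mode
    "ChatGPT": ["Reddit", "LinkedIn"],
    "Claude": ["Reddit", "LinkedIn"],
}


def platforms_to_prioritize(audience_surfaces):
    """Rank social platforms by how many of the audience's AI surfaces they feed."""
    tally = {}
    for surface in audience_surfaces:
        # Surfaces missing from the mapping contribute nothing.
        for platform in FEEDER_PLATFORMS.get(surface, []):
            tally[platform] = tally.get(platform, 0) + 1
    # Most-shared platforms first; ties broken alphabetically.
    return sorted(tally, key=lambda p: (-tally[p], p))


if __name__ == "__main__":
    print(platforms_to_prioritize(["ChatGPT", "Claude", "Google AI surfaces"]))
```

Counting surfaces is a crude weighting. If you know roughly what share of your buyers use each model, weight by that instead.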