Brands have been trying to read consumers’ minds for a century. They’ve measured people’s pulses in dimly lit rooms. They’ve eye-tracked shoppers in supermarket aisles. They’ve literally sorted through trash cans (the University of Arizona’s Garbology Project was based on the idea that people lie in surveys but their garbage doesn’t).

All of it is a workaround for the same problem. People don’t tell strangers what they actually think. Except, it turns out, when the stranger is ChatGPT.

There’s a running joke online that the thing people fear most isn’t death or public speaking, it’s their ChatGPT history getting out. That’s the joke. It’s also the insight. People tell these tools things they wouldn’t admit to a friend, a therapist, or a survey designer. And most brands aren’t paying attention.

What Is AI Market Research?

Traditional AI market research is already here. Platforms like Brandwatch, Quantilope, and Sprinklr have been baking machine learning into survey automation and social listening for years. AI makes them faster and better at parsing unstructured text, but they still rely on the same research paradigm we’ve had since Robert Merton invented the focus group for WWII propaganda research in 1941.

Basically, it comes down to someone deciding what to ask, who to ask, and how to interpret the answers.

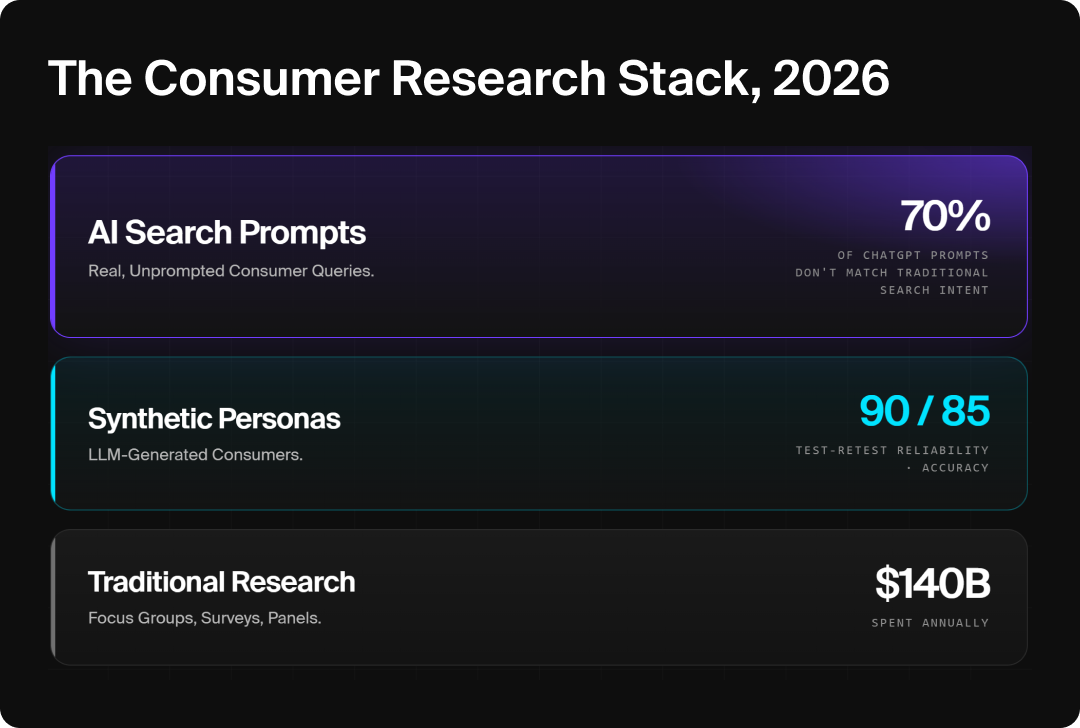

The Rise of Synthetic Personas

The bleeding edge of the space is something called synthetic personas. These are simulated consumers built entirely out of LLMs. The weird part is that they actually work.

The thing is, synthetic personas have real limits. They inherit the biases of their training data, they miss emotional nuance, and they can only be as good as the real conversations they learned from. Which is the irony nobody’s talking about: synthetic personas work because LLMs absorb an enormous volume of actual human discourse from Reddit, forums, reviews, social media and comment sections.

So why are brands building simulations when they could just go to the source?

The visual story: each tier got us closer to the real consumer. The top tier is where they already are.

The Pivot Most Brands Are Missing

Before AI search, the only way to know what a customer was thinking was to ask them directly. You paid a research firm to run a survey, ran a focus group, or interviewed customers one by one. The entire consumer insights industry was built around a single assumption: you had to ask.

That assumption began to fragment with the rise of social media but was completely destroyed with the transition to AI search.

AI search is the largest unprompted consumer research dataset in history. It’s real-time, voluntary, and there’s no researcher in the room. People are volunteering the exact detail, context, and emotion a survey designer would normally have to coax out of them, without being asked.

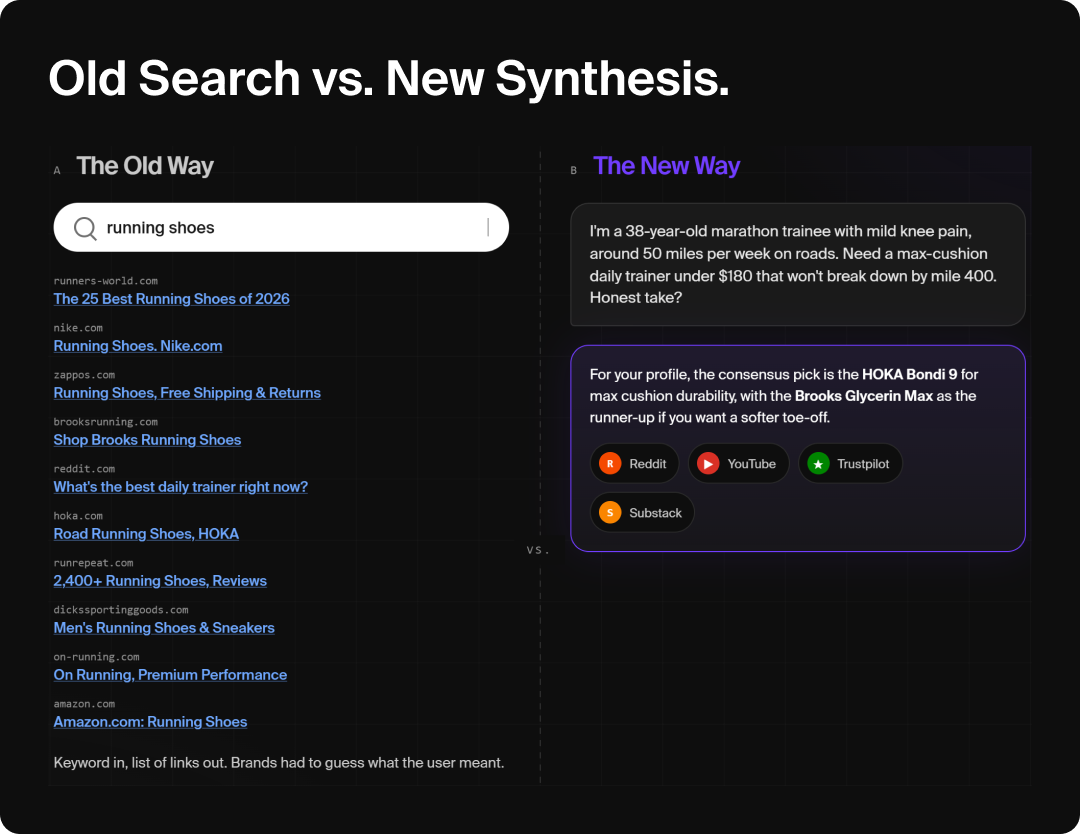

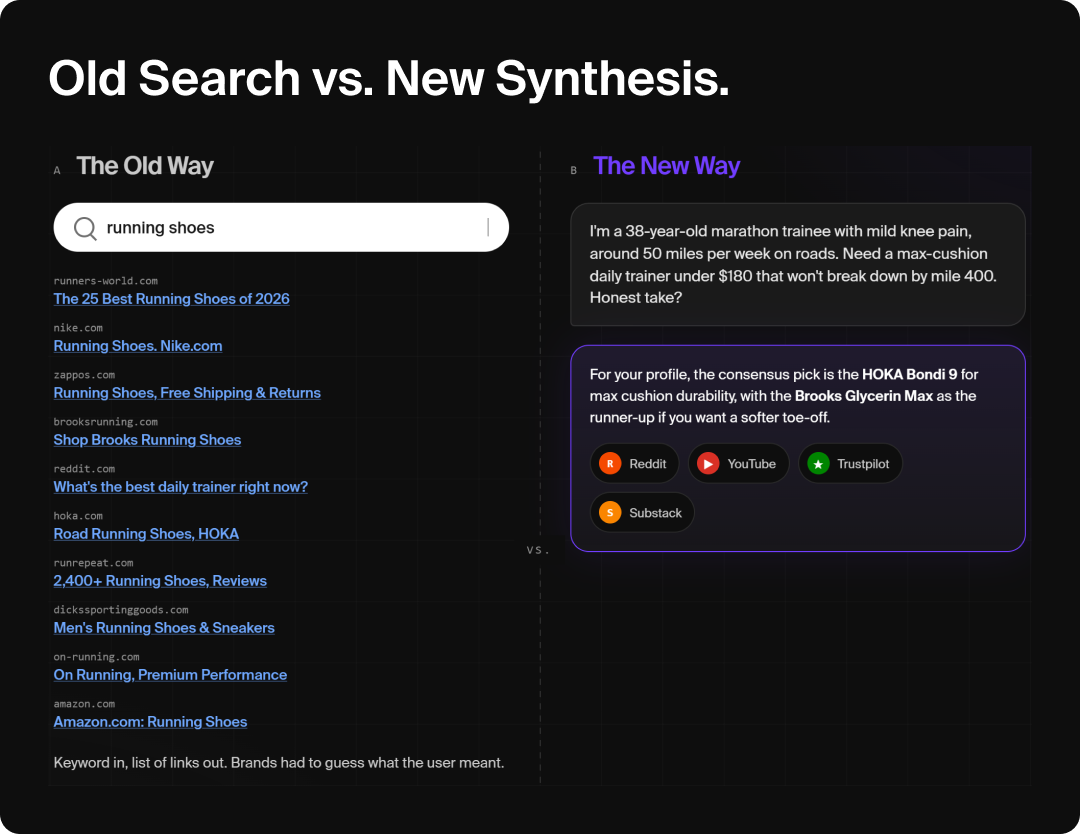

A shopper doesn’t type “women’s running shoes” into ChatGPT. They type, “I’m training for my first half marathon, my knees have been bothering me, and I need something that works for flat feet.” You now know the use case, the medical context, and the purchase readiness. None of this would appear in keyword data. And no consumer would volunteer it on a survey without three follow-ups.

Per Goodie’s 2026 AI Search Report, the most-cited domains in AI answers skew heavily toward social and UGC platforms (Reddit, YouTube, Quora, LinkedIn, TikTok, Substack) because those are where the conversational, experience-based answers actually live. Your old keyword tool sees “running shoes.” It doesn’t see the full question, the context, or the sequence.

And it should worry anyone still relying on classical surveys that Stanford GSB’s 2024 research found roughly 1/3 of Prolific survey respondents admitted using LLMs to answer the surveys themselves. Even “authentic” human survey data isn’t always coming from the human you are interviewing. Raw, unprompted social conversation doesn’t have that problem.

Here’s what marketers caught on to quickly: people talk to LLMs the way they talk to friends.

They don’t search for “best CRM SMB.” They type, “Hey, we’re a five-person team and we hate Salesforce’s UI, what should we switch to?” They don’t search “coffee shops Los Angeles.” They ask Claude, “Where can I get a matcha in Weho that doesn’t taste like grass and that doesn’t have a line as long as Community Goods?”

That tone (conversational, specific, slightly emotional) is the exact tone people use on Reddit, in forums, in Facebook groups, in TikTok comments. Which is why LLMs pull so heavily from those platforms. The linguistic match is pretty close to perfect.

The Language of AI Search Is the Language of Forums

Society spent 25 years developing a “language” for Google: short keywords, direct queries. AI search is a fundamentally different thing. You cannot possibly create enough keyword-targeted pages to cover every microvariation in every consumer question (and you shouldn’t try, that would be dumb). Instead, the goal is to create content that answers a relevant question and any auxiliary questions so thoroughly that AI deems it good enough to scrape and synthesize.

Traditional sentiment tools were famously bad at reading this kind of conversational language. A dashboard from 2019 might read “this brand is sick” and not know whether that was a compliment or a complaint (if you work at a brand literally called NoGood, thank your lucky stars that that got fixed). LLMs handle tone, irony, and slang in ways word-cloud generators couldn’t dream of. They parse how something is said, not just what’s said.

The Byproduct Framing

Here’s what matters: LLMs aren’t trying to do consumer research. They’re trying to give the best possible answer.

That makes the resulting insight inherently unbiased. It’s not designed to please a brand, confirm a hypothesis, or keep a client happy. It has the exact same goal as a brand doing consumer research, which is to provide something that actually fulfills customers’ wants and needs.

The consumer research is a byproduct of the answer, which is exactly what makes it more authentic than almost any traditional research method. It also helps that LLMs structurally favor content organized as Q&A, which is why Reddit, Quora, and community forums get cited so heavily. They’re literally formatted as one person asking a question and real people giving clear answers.

The Social Citation Explosion

AI isn’t just casually pulling from social. It’s increasingly dependent on it. Per Goodie’s Social Content Type Study, social content now drives a disproportionate share of AI citations, with YouTube overtaking Reddit as the dominant social source. Instagram and TikTok went from effectively zero citations to actively cited within a single month in late 2025. The chaotic, emotional, identity-driven content your old social listening tool flagged as “noise” is now one of the loudest signals shaping what AI tells your customers about you.

Google signed a reported $60M/year content licensing deal with Reddit specifically to train on it. Not an accident. Social listening is a foundational layer for LLM knowledge.

What Prompting Patterns Reveal About Consumer Decision-Making

Traditional search fragmented the consumer journey across dozens of sessions. Someone would Google “best CRM small team,” close the tab, come back two days later for “HubSpot vs Salesforce,” and eventually land on “HubSpot pricing 2026” ten days later. Analysts stitched a decision together from pixel fragments. AI search compresses that whole arc into a single conversation.

The Full Decision Psychology, in One Session

In one session you can see the entire reasoning chain:

- Primary prompt: “What’s the best CRM for a 7-person sales team?”

- Comparative follow-up: “How does HubSpot compare to Salesforce for onboarding?”

- Objection surfacing: “Has anyone had issues with HubSpot support for teams under 10?”

- Decision trigger: “HubSpot pricing for 2026 with Sales Hub included”

That’s a full consumer decision, sequentially, in thirty seconds of scrolling with the whole chain of reasoning right there.
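To make the four stages above concrete, here is a minimal sketch of tagging each prompt in a session with its stage. The keyword patterns and the `tag_stage` helper are illustrative assumptions, not a method from any tool mentioned here; a real classifier would need far richer cues than a handful of regexes.

```python
import re

# Hypothetical keyword cues per stage, checked from most to least specific.
# These patterns are assumptions for illustration; tune them per category.
STAGE_PATTERNS = [
    ("decision trigger", re.compile(r"\b(pricing|price|cost|discount|trial)\b", re.I)),
    ("objection surfacing", re.compile(r"\b(issues?|problems?|complaints?)\b", re.I)),
    ("comparative follow-up", re.compile(r"\b(compare[sd]?|vs|versus|alternatives?)\b", re.I)),
    ("primary prompt", re.compile(r"\b(best|recommend|which|what)\b", re.I)),
]


def tag_stage(prompt: str) -> str:
    """Return the first stage whose pattern matches the prompt."""
    for stage, pattern in STAGE_PATTERNS:
        if pattern.search(prompt):
            return stage
    return "unclassified"


# The example session from the list above.
session = [
    "What's the best CRM for a 7-person sales team?",
    "How does HubSpot compare to Salesforce for onboarding?",
    "Has anyone had issues with HubSpot support for teams under 10?",
    "HubSpot pricing for 2026 with Sales Hub included",
]

journey = [(tag_stage(p), p) for p in session]
for stage, prompt in journey:
    print(f"{stage:>22}: {prompt}")
```

The order of the patterns matters: a prompt that mentions both "best" and "pricing" is treated as a decision trigger, on the assumption that the later-stage cue is the stronger signal.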

Query Fan-Out Mirrors How Consumers Actually Think

Per Goodie’s breakdown of query fan-out, AI search engines don’t treat a prompt as a single question. They expand it into 5 to 15 sub-queries, run them in parallel, and synthesize the results. A prompt like “best running shoes for flat feet and plantar fasciitis” becomes a constellation: shoe reviews, podiatrist recommendations, plantar fasciitis management, arch support mechanics, brand-specific comparisons.

Mechanically, that’s how consumers actually think. Nobody has one question; they have a chain of related questions. Traditional search forced them to ask each one separately. AI search runs them in parallel.
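As a rough sketch of the expand, run in parallel, and synthesize pattern described above, the snippet below fans one prompt out into sub-queries and fetches them concurrently. The `expand` and `retrieve` functions are stand-ins invented for illustration: a real engine generates sub-queries with a model and calls an actual search backend, and nothing here reflects how any specific AI search product is implemented.

```python
from concurrent.futures import ThreadPoolExecutor


def expand(prompt: str) -> list[str]:
    """Hypothetical fan-out step: append fixed facets to the prompt."""
    facets = [
        "reviews",
        "expert recommendations",
        "common problems",
        "brand comparisons",
        "pricing",
    ]
    return [f"{prompt} {facet}" for facet in facets]


def retrieve(sub_query: str) -> dict:
    """Stand-in for a search call; returns a placeholder result record."""
    return {"query": sub_query, "snippet": f"(top result for: {sub_query})"}


def fan_out(prompt: str) -> list[dict]:
    """Expand a prompt into sub-queries and fetch them concurrently."""
    sub_queries = expand(prompt)
    with ThreadPoolExecutor(max_workers=len(sub_queries)) as pool:
        # map() returns results in input order even though calls overlap.
        return list(pool.map(retrieve, sub_queries))


results = fan_out("best running shoes for flat feet and plantar fasciitis")
for record in results:
    print(record["query"])
```

For research purposes, the useful output is the list of sub-queries itself, since each one is a question the consumer did not have to type.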

Different LLMs Reveal Different Consumer Mindsets

People use different LLMs for different jobs, which means each one is a different research lens:

- ChatGPT skews toward comparative, rational, task-oriented queries.

- Claude attracts deeper research and more nuanced prompts (and penalizes content that makes claims without evidence).

- Google AI Overviews blend authority-heavy sources with community signals.

- Perplexity leans into source-cited research queries.

- Meta AI pulls directly from Instagram and Facebook.

- Grok pulls heavily from live X threads.

Testing the same prompt across all of them is effectively running six focus groups.

Social content is highly exploratory, visual, and emotionally driven. You don’t go on TikTok to find out how many days there are until Black Friday. You go to see what kinds of sweaters the “it girls” are shopping for this fall, and then you absolutely do not buy that sweater; you buy something else because a different creator’s vibe hit harder at 11:47pm on a Tuesday.

That kind of identity-driven, unpredictable discovery is a fundamentally different consumer signal than a Google keyword. And yet all of it (the clicks, the saves, the shares, the comments) is being synthesized into crisp, factual answers by LLMs.

The Morning After the Group Chat

One way to think of it is that social content is the group chat on a night out. Emotional, chaotic, inside-joke-heavy, full of opinions and frankly often difficult to understand the next day. AI search is the morning-after debrief, where one friend pieces together the texts and screenshots and gives you the clean summary of what actually happened.

Both matter, but they carry different information. The group chat has the feeling. The debrief has the takeaway. LLMs are doing the debrief, at scale, across millions of group chats that you’re not in. Traditional social listening tools have been trying to produce that level of synthesis for fifteen years with sentiment scores and word clouds. LLMs do it at a quality those tools can’t match.

Meta Is Closing the Loop

The line between social platform and AI search engine is disappearing… and nowhere faster than inside Meta. 64% of U.S. Gen Z now use social platforms as search engines, and every time someone searches inside Instagram or Facebook, Meta AI is pulling from those same Instagram posts, Reels, and Facebook groups to generate its answer.

Gen Z searches social. Meta AI synthesizes social. Users see results that are largely made of more social. Rinse, repeat.

The Feedback Loop Is the Real Story

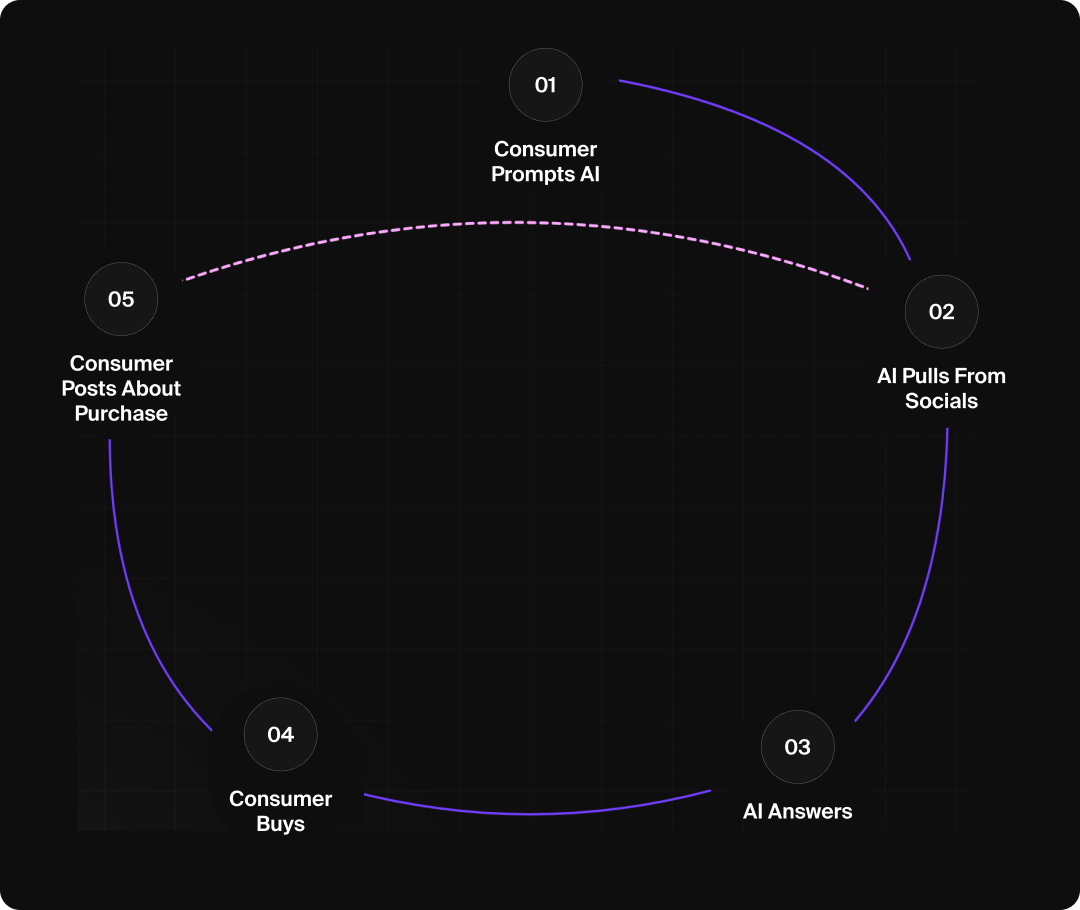

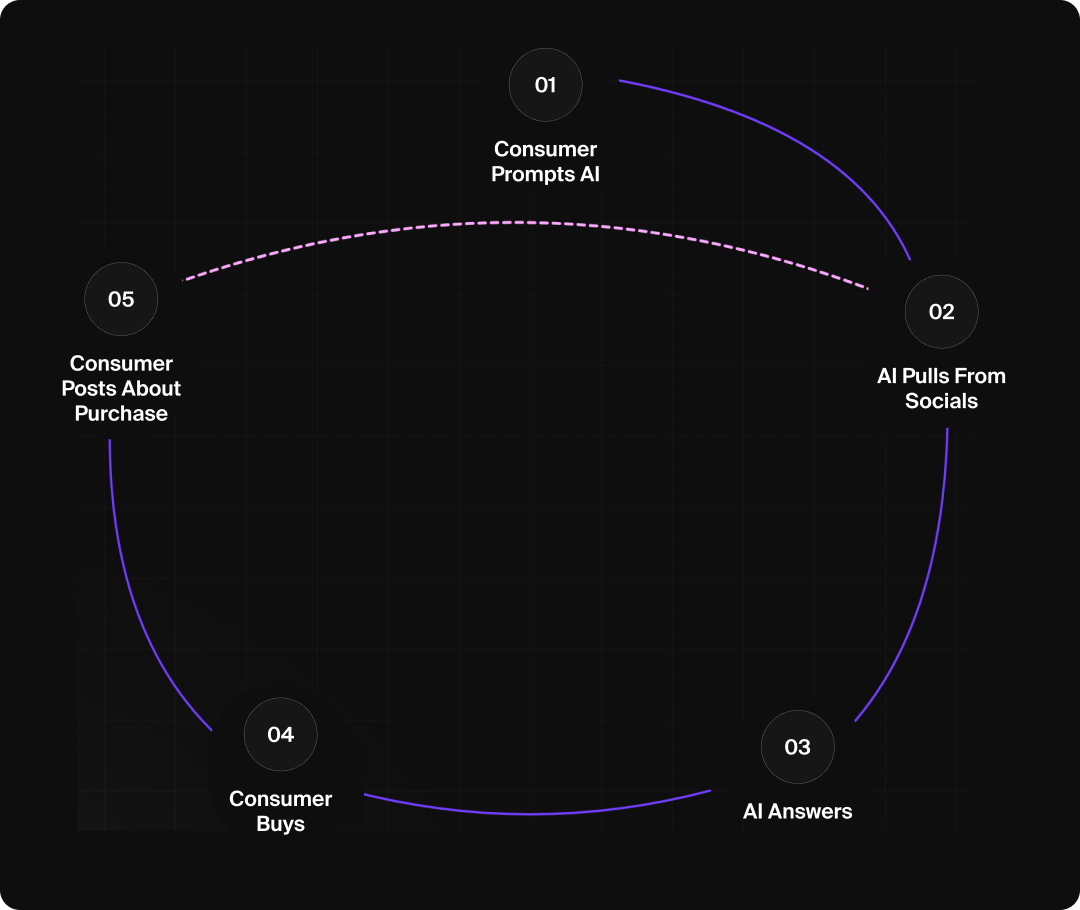

What consumers see in AI answers today is shaping what they buy tomorrow, and what they buy tomorrow becomes the training data for next quarter’s answers. The cycle: consumer prompts AI → AI pulls from social consensus → AI delivers an answer → consumer buys → consumer posts about it → that content feeds the next round of retrieval. Repeat.

AI search doesn’t just reflect what consumers want. It actively shapes what they want, and the cycle is tightening: Adobe’s data shows AI referral traffic doubling roughly every two months since September 2024.

Why AI Conversions Are So Much Higher

Rep AI’s analysis of Shopify stores found AI chat users convert at 12.3% vs. 3.1% for non-AI traffic, roughly 4x higher (and they check out 47% faster).

Those numbers aren’t a fluke. They’re evidence that AI is understanding customer wants and needs more precisely than any other discovery surface available right now. The customer arrives already having told the AI their use case, their constraints, their budget, and their objections. The AI then hands them three options that match.

So, of course the conversion rate is 4x. It’s the first channel in history where the consumer has effectively pre-qualified themselves out loud. If you want to understand what your customers actually want, this is where they’re telling you.
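The "roughly 4x" figure is simply the ratio of the two conversion rates quoted above. A quick check, using only those two numbers (the per-1,000-visitor framing is an added illustration, not a figure from the Rep AI analysis):

```python
# Conversion rates as quoted above.
ai_rate = 0.123      # AI chat users
non_ai_rate = 0.031  # non-AI traffic

# Relative lift: how many times more likely an AI chat user is to convert.
lift = ai_rate / non_ai_rate

# Illustrative framing: extra orders per 1,000 visitors at these rates.
extra_per_thousand = round((ai_rate - non_ai_rate) * 1000)

print(f"Lift: {lift:.1f}x")
print(f"Extra orders per 1,000 visitors: {extra_per_thousand}")
```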

How to Use AI Search Insights for Consumer Research

Here’s the practical part. The version you can bring to your next Monday marketing meeting.

1. Monitor Your AI Visibility

Tools like Goodie let you see which sources AI platforms are actually citing when people ask about your category. Not just “are we showing up,” but “how are we positioned vs. competitors, and what are they doing that we aren’t?”

2. Track Prompt Journeys, Not Just Keywords

The sequence is the signal. If customers are asking “best X” then “X vs. Y” then “X pricing” then “has anyone had issues with X support,” that last prompt is doing more work than all the others combined.

Map the full sequence. Look at query fan-out to expand each prompt into 5 to 15 sub-queries. The sub-queries are where the unspoken objections live.

3. Treat Social Platforms as Your Always-On Focus Group

What people say about you on Reddit, in reviews, in TikTok comments, and in niche Discord servers IS what AI is reading and synthesizing. Treat those platforms as your always-on focus group. Even the mean comments. Especially the mean comments.

The deeper move is to track which specific citations keep showing up in AI answers about your category. Those citations are the knowledge LLMs are distributing to consumers on your behalf. If the same Reddit thread or YouTube video keeps getting pulled, that’s the thing shaping consumer perception of your brand right now.
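One simple way to spot the citations that keep recurring is to log the sources cited in each AI answer and count them. The sketch below assumes you already have those URLs collected, whether by hand or exported from a visibility tool. The sample data is made up for illustration.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical sample: one list of cited URLs per AI answer collected.
answers = [
    ["https://www.reddit.com/r/sales/comments/abc", "https://www.youtube.com/watch?v=xyz"],
    ["https://www.reddit.com/r/sales/comments/abc", "https://example.com/crm-guide"],
    ["https://www.reddit.com/r/sales/comments/abc", "https://www.youtube.com/watch?v=xyz"],
]


def citation_counts(answers: list[list[str]]) -> tuple[Counter, Counter]:
    """Count how many answers cite each URL and each domain."""
    urls: Counter = Counter()
    domains: Counter = Counter()
    for cited in answers:
        for url in set(cited):  # count each URL at most once per answer
            urls[url] += 1
            domains[urlparse(url).netloc.removeprefix("www.")] += 1
    return urls, domains


url_counts, domain_counts = citation_counts(answers)
top_url, top_hits = url_counts.most_common(1)[0]
print(f"Most-cited source: {top_url} ({top_hits} of {len(answers)} answers)")
print(domain_counts.most_common())
```

Counting per answer rather than per mention keeps one long answer that cites the same thread five times from distorting the picture.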

4. Use Different LLMs as Different Research Lenses

Run prompts at scale for category questions across ChatGPT, Claude, Meta AI, Google AI Overviews, etc. Each one surfaces a different slice of consumer psychology, from rational comparison to real-time cultural sentiment to social commerce behavior. This takes about twenty minutes a month and it’s effectively free.
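A lightweight way to structure that comparison is to send the same prompt to each platform and record which brands each answer mentions. In the sketch below, the platform clients are stubs returning canned placeholder text and the brand names are fictional. In practice you would swap each stub for a real API call or paste in answers you collected manually.

```python
from typing import Callable


def make_stub(canned: str) -> Callable[[str], str]:
    """Build a fake platform client that always returns the same text."""
    return lambda prompt: canned


# Placeholder answers, NOT real model output. Brand names are fictional.
PLATFORMS: dict[str, Callable[[str], str]] = {
    "ChatGPT": make_stub("Popular picks include Acme and Globex."),
    "Claude": make_stub("Acme suits small teams; Initech is heavier to onboard."),
    "Perplexity": make_stub("Sources point to Globex for small teams."),
}
BRANDS = ["Acme", "Globex", "Initech"]


def brand_mentions(prompt: str) -> dict[str, list[str]]:
    """Ask every platform the same prompt and list the brands each names."""
    report = {}
    for name, ask in PLATFORMS.items():
        answer = ask(prompt).lower()
        report[name] = [b for b in BRANDS if b.lower() in answer]
    return report


report = brand_mentions("What's the best CRM for a 7-person sales team?")
for platform, brands in report.items():
    print(f"{platform}: {', '.join(brands) or 'no tracked brands mentioned'}")
```

Simple substring matching like this will miss misspellings and nicknames, so treat the output as a first pass rather than a measurement.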

5. Layer AI Search on Top of Traditional Tools

Don’t abandon surveys, social listening, or UX research. Traditional tools tell you what people say when asked. AI search tells you what people say when nobody’s asking. Given that roughly 1/3 of Prolific respondents in the Stanford GSB research admitted using LLMs to answer surveys, your “clean” human data is getting contaminated in a way your raw social conversation isn’t.

6. Treat the Feedback Loop as a Leading Indicator

What AI tells consumers today shapes what they want tomorrow. If your brand isn’t cited in your category’s AI answers right now, you’ll see it in organic search trends, review sentiment, and purchase intent surveys six months from now. Monitor AI representation as a leading indicator, not a presence metric. Brands that wait are going to spend 2027 clawing back ground they gave up in 2026.

The Bottom Line

The Garbology Project I opened this article with proved something that consumer researchers have known for decades but never had a good solution for: people lie. Consistently. In predictable directions. Sober respondents claim to drink less alcohol than their garbage suggests. Dieters claim to eat less sugar than their garbage suggests. Everyone overstates their vegetable intake.

AI search is the first consumer research surface where they don’t lie, because they don’t know they’re being studied. They’re just asking a question and moving on. Your customers are already telling ChatGPT, Claude, Perplexity, and Gemini what they actually want, in their own words, with full context and no researcher in the room.

The question isn’t whether to use AI search as market research. It’s whether you’re listening.